Types of Software Testing: A Complete Classification

3. By Testing Level: The Test Pyramid

Testing levels describe the scope of what is being tested. Mike Cohn's test pyramid remains the most useful mental model. As it is commonly drawn today, it has a large base of fast unit tests, a smaller middle layer of integration tests, and a thin top layer of end-to-end tests.

Unit Testing

Tests a single function, method, or class in isolation. Dependencies are mocked or stubbed. Unit tests run in milliseconds, are written by developers as they code, and form the foundation of the test pyramid. A mature codebase often has thousands of them, and they typically run on every commit.
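
To make "in isolation" concrete, here is a minimal sketch using Python's standard `unittest` and `unittest.mock`. The function `order_total` and the `tax_for` method on the tax service are hypothetical names invented for this illustration; the point is only that the real dependency is replaced by a stub with a canned answer.

```python
import unittest
from unittest.mock import Mock


def order_total(cart, tax_service):
    """Return the cart subtotal plus tax, rounded to cents (hypothetical example)."""
    subtotal = sum(item["price"] * item["qty"] for item in cart)
    return round(subtotal + tax_service.tax_for(subtotal), 2)


class OrderTotalTest(unittest.TestCase):
    def test_adds_tax_from_service(self):
        # The real tax service is replaced by a stub, so no network or
        # database is touched and the test stays fast.
        tax_service = Mock()
        tax_service.tax_for.return_value = 2.0

        cart = [{"price": 10.0, "qty": 2}]

        self.assertEqual(order_total(cart, tax_service), 22.0)
        # Also verify how the unit talked to its dependency.
        tax_service.tax_for.assert_called_once_with(20.0)
```

Saved as a test module, this can be run with `python -m unittest`. Only the arithmetic inside `order_total` is under test; whether the real tax service behaves as the stub assumes is a question for the next level up.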

Integration Testing

Tests how multiple units work together. API contract tests, database integration tests, and tests that exercise interactions between services all sit here. Integration tests are slower than unit tests - seconds instead of milliseconds - and catch a different class of defects: wrong assumptions about how components communicate.
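
As a rough sketch of a database integration test, the example below runs a small data-access class against a real SQL engine instead of a mock. It assumes an in-memory SQLite database from Python's standard library as a stand-in for the production database, and `UserRepository` is a hypothetical class made up for this illustration.

```python
import sqlite3
import unittest


class UserRepository:
    """Hypothetical data-access class that talks to a real SQL database."""

    def __init__(self, conn):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS users ("
            "id INTEGER PRIMARY KEY, email TEXT UNIQUE NOT NULL)"
        )

    def add(self, email):
        cur = self.conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
        self.conn.commit()
        return cur.lastrowid

    def find_by_email(self, email):
        row = self.conn.execute(
            "SELECT id, email FROM users WHERE email = ?", (email,)
        ).fetchone()
        return None if row is None else {"id": row[0], "email": row[1]}


class UserRepositoryIntegrationTest(unittest.TestCase):
    def setUp(self):
        # A fresh in-memory database per test keeps tests independent.
        self.conn = sqlite3.connect(":memory:")
        self.repo = UserRepository(self.conn)

    def tearDown(self):
        self.conn.close()

    def test_round_trip(self):
        user_id = self.repo.add("ada@example.com")
        self.assertEqual(
            self.repo.find_by_email("ada@example.com"),
            {"id": user_id, "email": "ada@example.com"},
        )

    def test_duplicate_email_is_rejected_by_the_database(self):
        # This assumption lives in the schema, not in the Python code,
        # so a unit test with a mocked connection could not catch it.
        self.repo.add("ada@example.com")
        with self.assertRaises(sqlite3.IntegrityError):
            self.repo.add("ada@example.com")
```

The second test is the kind of defect this level exists for: the code and the schema each look fine on their own, and only running them together shows whether their assumptions match.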

System Testing

Tests the entire application as a complete, integrated system. Features are exercised end-to-end against a production-like environment. System testing validates that the assembled product works, not just that individual pieces work in isolation.

Acceptance Testing

Validates whether the software meets business requirements and is ready for release. Acceptance testing comes in several forms: user acceptance testing (UAT) done by business users, alpha testing by internal staff, and beta testing by a subset of real customers. The question answered here is not "does it work?" but "is this what was actually needed?"

4. By Purpose: Functional vs Non-functional

The most common classification splits testing by purpose. Functional testing asks "does the system do the right thing?" Non-functional testing asks "does it do it well enough?"

Functional Testing Types

Functional testing verifies that each feature works according to its specification. These types show up in almost every QA plan:

- Smoke testing. A shallow, broad check that the critical functionality works before deeper testing begins. If smoke tests fail, the build is rejected and no further testing happens. A smoke suite typically runs in minutes.

- Sanity testing. A narrow, deep check of a specific area after a minor change or bug fix. Smoke covers the whole system shallowly; sanity covers one slice of it deeply. See our smoke vs sanity testing guide for the full distinction.

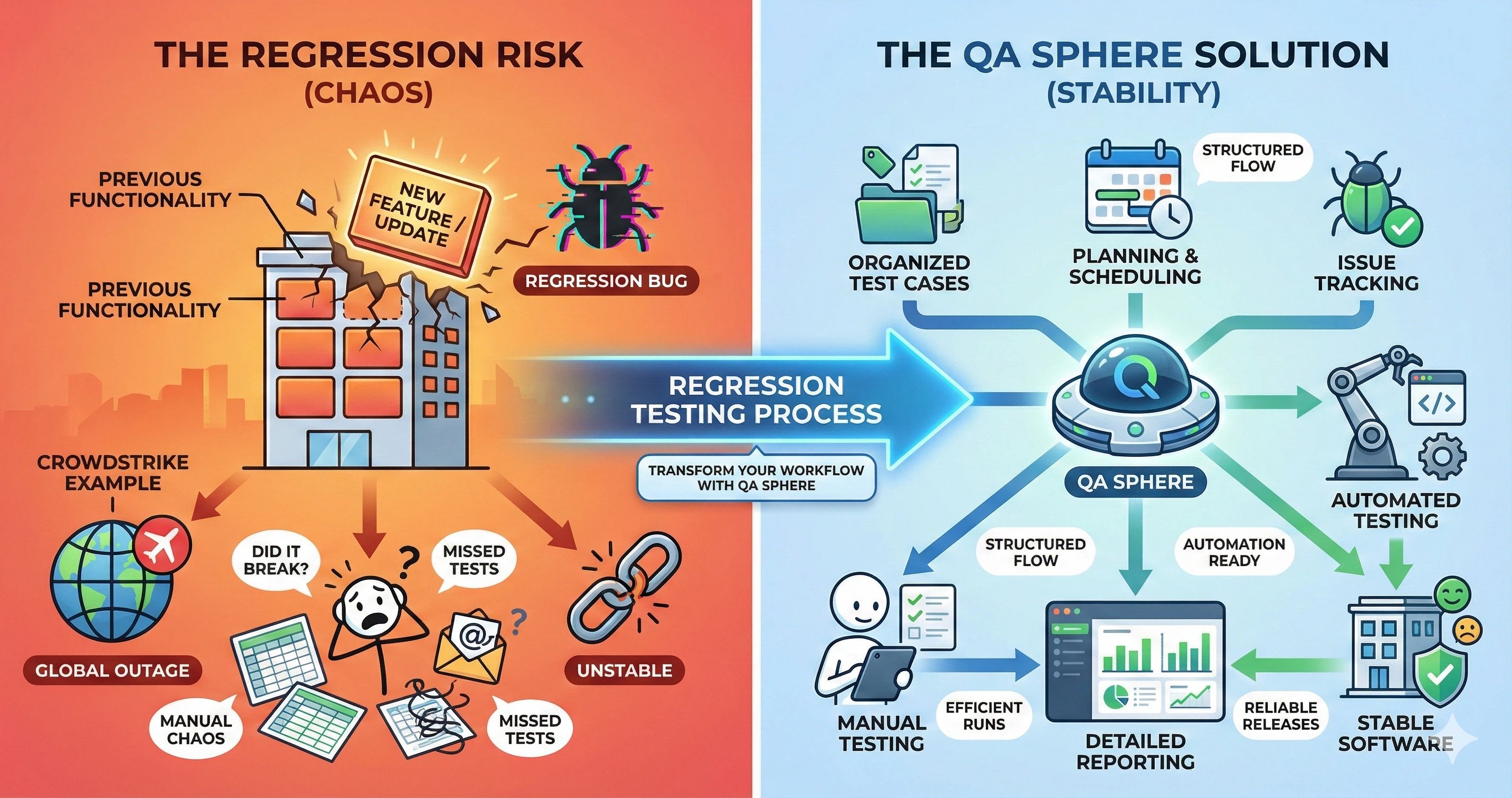

- Regression testing. Re-runs previously passing tests after changes to verify nothing broke. The regression suite grows with the product and is the primary candidate for automation. Our regression testing guide covers how to build one that stays maintainable.

- Functional acceptance testing. Verifies that user-facing features meet documented acceptance criteria, typically driven by user stories in agile teams.
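
The smoke-gate rule described above (a failed smoke suite rejects the build and nothing else runs) can be sketched in a few lines. This is an illustration only, not how any particular test runner implements it; `Check` and `run_pipeline` are hypothetical names, and real teams usually express the same idea with tags or markers in their test framework.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    name: str
    run: Callable[[], bool]  # returns True when the check passes
    smoke: bool = False      # True for the shallow, critical-path checks


def run_pipeline(checks: List[Check]) -> dict:
    """Run smoke checks first; run the remaining checks only if smoke passes."""
    smoke = [c for c in checks if c.smoke]
    rest = [c for c in checks if not c.smoke]

    failed_smoke = [c.name for c in smoke if not c.run()]
    if failed_smoke:
        # Build rejected: deeper testing never starts.
        return {"status": "rejected", "failed": failed_smoke, "executed": len(smoke)}

    failed_rest = [c.name for c in rest if not c.run()]
    return {
        "status": "failed" if failed_rest else "passed",
        "failed": failed_rest,
        "executed": len(checks),
    }
```

With one failing smoke check, `run_pipeline` reports `"rejected"` and the regression checks are never executed, which is what keeps a broken build from wasting hours of deeper testing.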

Non-functional Testing Types

Non-functional testing checks qualities that matter to users but are not tied to a specific feature: speed, reliability, security, accessibility. A product can pass every functional test and still fail in production if non-functional requirements are ignored.

- Performance testing. An umbrella for several sub-types: load testing verifies behavior under expected traffic, stress testing pushes the system past its limits to find the breaking point, spike testing checks response to sudden traffic surges, volume testing uses large datasets, and endurance testing runs the system under load for hours or days to find memory leaks and slow degradation.

- Security testing. Finds vulnerabilities before attackers do. Includes vulnerability scanning, penetration testing, authentication and authorization checks, and compliance validation against frameworks and regulations such as the OWASP Top 10, SOC 2, or HIPAA.

- Usability testing. Evaluates how easy the product is to use. Typically done with real users - or representative surrogates - performing realistic tasks while observers record friction points.

- Compatibility testing. Verifies the product works across the required matrix of browsers, operating systems, devices, and screen sizes. Particularly important for web and mobile applications.

- Accessibility testing. Checks conformance to accessibility standards like WCAG 2.1 - keyboard navigation, screen reader support, color contrast, focus management. Increasingly a legal requirement, not just a nice-to-have.

- Reliability testing. Measures how often the system fails and how well it recovers. Mean time between failures (MTBF) and mean time to recovery (MTTR) are the common metrics.

- Scalability testing. Measures how the system handles growth - more users, more data, more transactions. Overlaps with performance testing but focuses on how performance changes as load grows rather than on absolute numbers at a fixed load.
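
The reliability metrics mentioned above are simple arithmetic once the outage data is in hand. The sketch below assumes the common definitions (MTBF as uptime divided by number of failures, MTTR as downtime divided by number of failures) and uses made-up outage durations; `reliability_metrics` is a hypothetical helper, not part of any library.

```python
def reliability_metrics(observation_hours, outage_hours):
    """Compute MTBF, MTTR, and availability from a list of outage durations.

    observation_hours: total length of the observation window, in hours.
    outage_hours: one entry per failure, the hours it took to recover.
    """
    failures = len(outage_hours)
    if failures == 0:
        raise ValueError("MTBF and MTTR are undefined without at least one failure")

    downtime = sum(outage_hours)
    uptime = observation_hours - downtime

    mtbf = uptime / failures    # mean time between failures
    mttr = downtime / failures  # mean time to recovery
    availability = mtbf / (mtbf + mttr)
    return {"mtbf": mtbf, "mttr": mttr, "availability": availability}


# Illustrative numbers: a 30-day window (720 hours) with three outages.
metrics = reliability_metrics(720, [0.5, 1.0, 1.5])
print(metrics["mtbf"], metrics["mttr"])  # 239.0 1.0
```

In this example the system runs 239 hours between failures on average and takes one hour to recover, which works out to an availability of 239/240, a little under 99.6%.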

Other Testing Types Worth Knowing

A few additional types do not fit cleanly into the four axes but appear often enough to be worth naming:

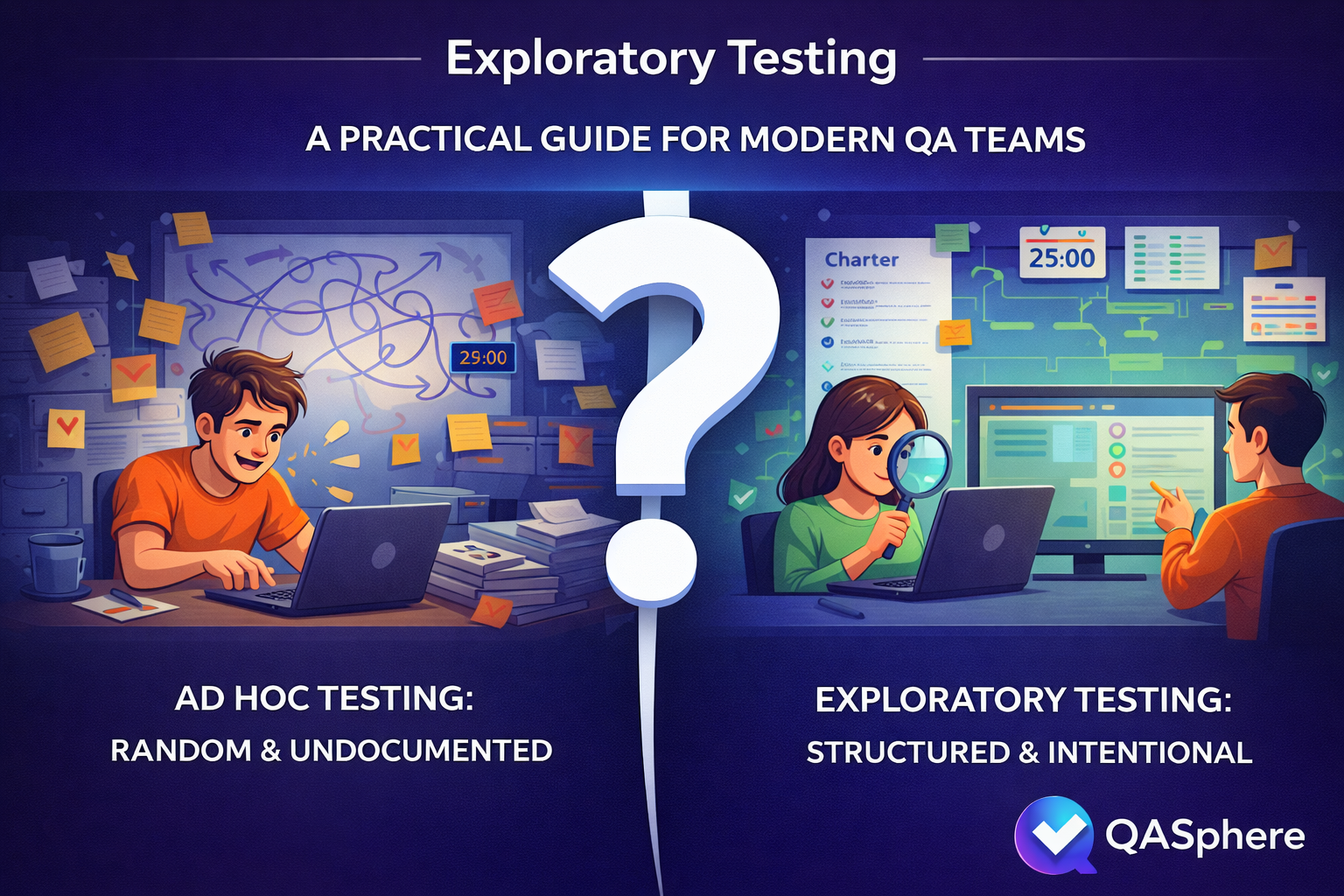

- Exploratory testing. Unscripted, investigative testing where the tester simultaneously designs and executes tests based on what they observe. Exploratory testing finds the defects that scripted tests miss because it follows curiosity rather than a pre-written path. See our exploratory testing guide for techniques and session management.

- Ad-hoc testing. Informal, unplanned testing with no documentation or structure. Useful for quick sanity checks but not a substitute for planned testing - results are hard to reproduce and easy to lose.

- A/B testing. Two versions of a feature are shown to different user segments to measure which performs better. More a product analytics technique than QA testing, but the QA team is often involved in setup and validation.

- Localization testing. Verifies the product works correctly in different languages, locales, and regions - date formats, currencies, right-to-left layouts, translated strings, regional regulations.

- Recovery testing. Forces failures - network drops, server crashes, disk full - and verifies the system recovers cleanly.
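
Recovery testing is usually done against real infrastructure, but the idea can be shown in miniature. The sketch below assumes a hypothetical `save_with_retry` function under test and a `FlakyStore` test double that simulates a network drop for the first few writes; both names are invented for this illustration.

```python
class FlakyStore:
    """Test double that fails the first `failures` writes, then recovers."""

    def __init__(self, failures):
        self.remaining_failures = failures
        self.saved = []

    def write(self, record):
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            raise ConnectionError("simulated network drop")
        self.saved.append(record)


def save_with_retry(store, record, attempts=3):
    """Try to write `record`, retrying on connection errors.

    Returns the attempt number that succeeded; re-raises the last
    ConnectionError if every attempt fails.
    """
    for attempt in range(1, attempts + 1):
        try:
            store.write(record)
            return attempt
        except ConnectionError:
            if attempt == attempts:
                raise


# Forced failure, then recovery: two drops, success on the third attempt.
store = FlakyStore(failures=2)
assert save_with_retry(store, {"id": 1}) == 3
# "Recovers cleanly" also means no duplicate or partial writes.
assert store.saved == [{"id": 1}]
```

The second assertion matters as much as the first. A system that eventually succeeds but leaves duplicate records behind has recovered, just not cleanly.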

Written by

QA Sphere Team

The QA Sphere team shares insights on software testing, quality assurance best practices, and test management strategies drawn from years of industry experience.