Exploratory Testing: A Practical Guide for Modern QA Teams

Exploratory testing is where skilled testers find the problems no checklist warned them about.

It happens in the messy space between what the team expected and what the product actually does. A screen feels inconsistent. A workflow breaks only after three "impossible" user actions in a row. An integration technically works, but only if the timing is perfect and the data is clean. Scripted tests rarely catch those problems first. Exploratory testing often does.

That does not make exploratory testing random or unstructured. Good exploratory testing is disciplined, intentional, and documented. The tester is learning, designing, and executing tests at the same time, then turning what they learn into actionable defects, better coverage, and stronger regression suites.

In this guide we will walk through what exploratory testing is, when to use it, and how to run it effectively. Along the way we will follow a single example from charter to debrief so you can see how the pieces fit together in practice.

What Is Exploratory Testing?

The term "exploratory testing" was coined by Cem Kaner in the mid-1980s and first appeared in print in his book Testing Computer Software (1988). Kaner described it as a style of skilled, multidisciplinary testing that was already common in Silicon Valley: he named an existing practice rather than inventing it.

At its core, exploratory testing is a testing approach where the tester investigates the product in real time, using each observation to decide what to test next. Instead of following a rigid script from top to bottom, the tester starts with a mission, explores the product deliberately, and adapts as new information appears.

That makes exploratory testing especially effective for:

- New features that have not settled yet

- Areas with unclear requirements or frequent changes

- Complex workflows with many state transitions

- Edge cases that are hard to predict in advance

- UI behavior, integrations, and real-world user paths

Exploratory testing is not a replacement for scripted testing or automation. It complements both. Scripted tests are excellent when you need repeatability. Exploratory testing is excellent when you need discovery.

If you already use both manual and automated testing, exploratory work is often the bridge between them. It helps teams discover risky behavior first, then decide what deserves a permanent test case or automated coverage later.

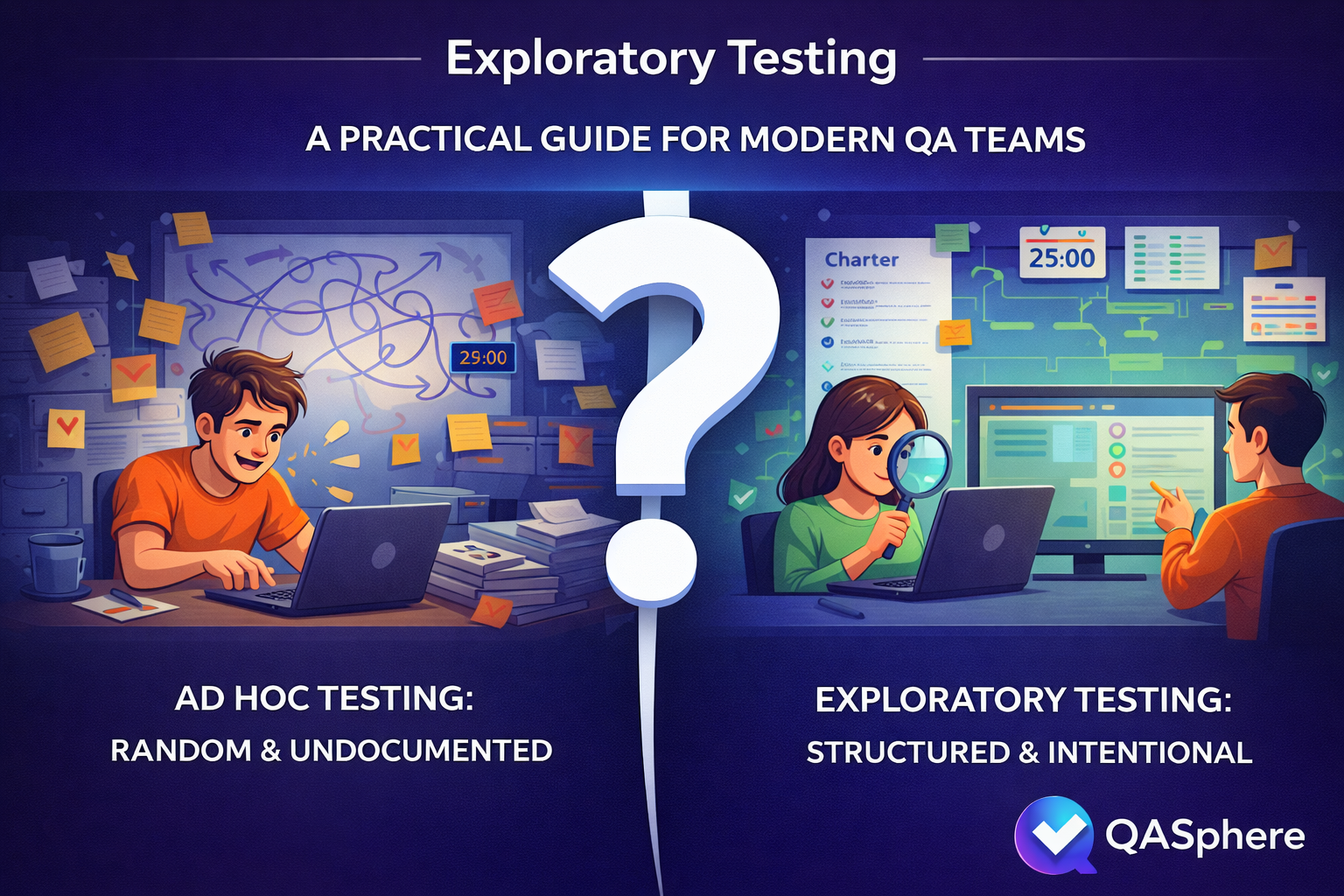

Exploratory Testing vs. Ad Hoc Testing

Teams often confuse exploratory testing with ad hoc testing. They are not the same.

| Aspect | Ad hoc testing | Exploratory testing |
|---|---|---|
| Goal | None defined | Clear objective tied to a risk or feature |
| Scope | Whatever catches attention | Focused area, defined in a charter |
| Time | Open-ended | Time-boxed sessions |
| Evidence | Rarely captured | Notes, observations, linked defects |
| Follow-up | Usually none | Debrief, new test cases, regression candidates |
| Repeatability | Hard to explain or reproduce | Session structure makes it reviewable |

The difference matters. Random clicking may find a bug. Exploratory testing builds knowledge.

When Should You Use Exploratory Testing?

Exploratory testing is most valuable when the product or the team needs learning, not just confirmation.

1. Right after a new feature lands

Requirements may look complete on paper while the real behavior is still rough. Exploratory testing helps you evaluate whether the implementation feels coherent in practice, not just whether it technically matches a checklist.

2. Before a release in risky areas

If a release touches authentication, payments, permissions, notifications, or integrations, exploratory sessions are often a fast way to expose high-impact gaps before they reach production.

3. When requirements are incomplete or ambiguous

If the expected behavior is not fully defined, exploratory testing helps surface the questions the team failed to ask earlier.

4. After bug fixes

Some fixes solve the immediate issue but create new inconsistencies nearby. Exploratory testing is useful for probing the surrounding area instead of re-checking only the original defect path.

5. When you need to understand user experience, not just correctness

A workflow can be "working" and still feel confusing, fragile, or easy to misuse. Exploratory testing is one of the best ways to assess that gap.

How to Run Exploratory Testing Well

The simplest way to make exploratory testing effective is to give it enough structure to stay focused without turning it into a fully scripted test. We will use a running example, password reset on mobile, to show how each step works in practice.

Start with a narrow charter

Your charter is the mission for the session. Keep it specific.

Good charter:

- Explore password reset on mobile after link expiration and interrupted network conditions

Weak charter:

- Test authentication

A practical charter usually answers four questions:

- What area are we exploring?

- What setup or data do we need?

- What risks are we probing?

- What are we trying to learn?

Here is the charter for our example:

Charter: Explore password reset behavior for expired links on mobile

Setup: Staging environment, test inbox, slow network profile, existing user with known credentials

Focus: Error handling, redirect behavior, token expiration, repeated attempts

Goal: Discover broken state transitions, unclear messaging, and recovery gaps
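The four charter questions map naturally onto a small record. The sketch below is a hypothetical Python representation. The `Charter` class and its field names are illustrative and not part of any tool. It fills in the password reset charter and flags blank fields. A completeness check cannot tell whether a charter is narrow enough. The weak "Test authentication" charter fails here only because its other fields are empty.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Charter:
    """One session's mission, mirroring the four charter questions (hypothetical model)."""
    area: str   # What area are we exploring?
    setup: str  # What setup or data do we need?
    focus: str  # What risks are we probing?
    goal: str   # What are we trying to learn?

    def missing_fields(self) -> list[str]:
        """Return the names of any fields left blank, in declaration order."""
        return [name for name, value in vars(self).items() if not value.strip()]


password_reset = Charter(
    area="Password reset behavior for expired links on mobile",
    setup="Staging environment, test inbox, slow network profile, "
          "existing user with known credentials",
    focus="Error handling, redirect behavior, token expiration, repeated attempts",
    goal="Discover broken state transitions, unclear messaging, and recovery gaps",
)
weak = Charter(area="Test authentication", setup="", focus="", goal="")

print(password_reset.missing_fields())  # []
print(weak.missing_fields())            # ['setup', 'focus', 'goal']
```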

Use a timebox

Exploratory testing works better when sessions are intentionally limited. James and Jonathan Bach formalized this idea with Session-Based Test Management (SBTM), where the core unit of work is a "session": an uninterrupted block of chartered test effort. A useful session length is typically thirty to ninety minutes. That is long enough to investigate meaningfully and short enough to stay sharp.

For our password reset charter, we might plan two 45-minute sessions. The first session focuses on expired link handling and error messages. The second session focuses on repeated reset attempts and what happens when the network drops mid-flow.

If a session needs several hours, the scope is probably too broad. Split it into smaller charters.
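During planning, you can apply the timebox rule by checking each planned session against the range. This is a minimal sketch that assumes the thirty-to-ninety-minute guideline above. The bounds are this guide's suggestion, not a fixed SBTM rule. `review_timebox` and the over-scoped "Explore checkout" entry are hypothetical.

```python
# Guideline from the text: sessions of roughly 30 to 90 minutes.
MIN_SESSION_MINUTES = 30
MAX_SESSION_MINUTES = 90


def review_timebox(planned_minutes: int) -> str:
    """Classify a planned session length against the timebox guideline."""
    if planned_minutes < MIN_SESSION_MINUTES:
        return "too short: may end before the investigation gets deep"
    if planned_minutes > MAX_SESSION_MINUTES:
        return "too broad: split into smaller charters"
    return "ok"


# Hypothetical plan: the two password reset sessions plus an over-scoped charter.
plan = {
    "Expired link handling and error messages": 45,
    "Repeated reset attempts and network drops mid-flow": 45,
    "Explore checkout": 180,
}

for focus, minutes in plan.items():
    print(f"{minutes:>3} min | {review_timebox(minutes)} | {focus}")
```

A length check only catches the symptom. When a session runs long, the fix is still to rewrite the charter, not to stretch the timebox.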

Take notes while you test

Do not trust memory. As you work through the session, capture:

- What you tried

- What data or environment you used

- What seemed wrong or surprising

- What defect or question was created

- What deserves follow-up coverage later

Here is what notes from the first password reset session might look like:

Session 1: Expired link handling (45 min)

[0:00] Started on staging, iOS Safari. Requested reset for the test user's email address.

[0:04] Clicked link immediately — works fine. New password accepted.

[0:08] Requested new link, waited 11 minutes for token to expire.

[0:19] Clicked expired link: got HTTP 200 with the reset form instead of an error.

Form renders, accepts input, then fails silently on submit. No error shown.

** BUG: Expired token serves the form instead of rejecting at the redirect **

[0:22] Tried requesting 5 reset links in quick succession.

All 5 arrive. Only the last one should be valid, but link #3 also worked.

** BUG: Previous tokens not invalidated when a new one is issued **

[0:30] Toggled airplane mode right after tapping submit on new password.

Spinner runs forever. No timeout, no retry prompt, no offline message.

** OBSERVATION: No network error handling on the reset confirmation screen **

[0:40] Attempted reset with mixed-case email. Received "user not found."

** QUESTION: Is email matching case-sensitive? Check with dev. **

[0:45] End of session.

Summary: 2 bugs, 1 UX gap, 1 question for the team.

The point is not perfect documentation. The point is preserving the learning so the debrief has something concrete to work with.
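Tagging findings consistently in the notes makes them easy to pull out for the debrief. The following sketch assumes the `** TAG: text **` convention used in the session notes above. The regex and `extract_findings` are illustrative, not a standard format. It groups tagged lines and skips the narrative lines.

```python
import re

# Matches tagged lines such as "** BUG: ... **" in the session notes format.
TAG_PATTERN = re.compile(r"^\*\*\s*(BUG|OBSERVATION|QUESTION):\s*(.+?)\s*\*\*$")


def extract_findings(notes: str) -> dict[str, list[str]]:
    """Group tagged note lines by tag; untagged narrative lines are skipped."""
    findings: dict[str, list[str]] = {"BUG": [], "OBSERVATION": [], "QUESTION": []}
    for line in notes.splitlines():
        match = TAG_PATTERN.match(line.strip())
        if match:
            tag, text = match.groups()
            findings[tag].append(text)
    return findings


SESSION_1_NOTES = """
Clicked expired link: got HTTP 200 with the reset form instead of an error.
** BUG: Expired token serves the form instead of rejecting at the redirect **
Tried requesting 5 reset links in quick succession.
** BUG: Previous tokens not invalidated when a new one is issued **
Toggled airplane mode right after tapping submit on new password.
** OBSERVATION: No network error handling on the reset confirmation screen **
Attempted reset with mixed-case email. Received "user not found."
** QUESTION: Is email matching case-sensitive? Check with dev. **
"""

found = extract_findings(SESSION_1_NOTES)
print({tag: len(items) for tag, items in found.items()})
# {'BUG': 2, 'OBSERVATION': 1, 'QUESTION': 1}
```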

End with a debrief

A good session should produce something useful even if it finds no bugs. Debrief the session and decide:

- Did we learn enough, or do we need another session?

- Should any findings become formal test cases?

- Should any path be added to regression coverage?

- Are there requirements or assumptions that need clarification?
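Some teams write these debrief questions down as a default triage policy, so every finding leaves the session with a next step. The mapping below is a hypothetical policy, not a standard. `Outcome`, `Finding`, and `triage` are illustrative names. Adjust the rules to how your team actually works.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    FILE_DEFECT = "file a defect"
    ADD_REGRESSION = "add to regression coverage"
    CLARIFY_REQUIREMENT = "clarify requirement with the team"
    FOLLOW_UP_SESSION = "schedule a follow-up charter"


@dataclass
class Finding:
    tag: str                  # "BUG", "OBSERVATION", or "QUESTION"
    summary: str
    reproducible: bool = True


def triage(finding: Finding) -> Outcome:
    """Hypothetical team policy mapping one finding to a debrief outcome."""
    if not finding.reproducible:
        return Outcome.FOLLOW_UP_SESSION  # not enough learned yet
    if finding.tag == "BUG":
        return Outcome.FILE_DEFECT
    if finding.tag == "OBSERVATION":
        return Outcome.ADD_REGRESSION
    if finding.tag == "QUESTION":
        return Outcome.CLARIFY_REQUIREMENT
    raise ValueError(f"unknown tag: {finding.tag!r}")


findings = [
    Finding("BUG", "Expired token serves the form instead of rejecting at the redirect"),
    Finding("OBSERVATION", "No network error handling on the reset confirmation screen"),
    Finding("QUESTION", "Is email matching case-sensitive?"),
]
for f in findings:
    print(f"{triage(f).value:<35} <- {f.summary}")
```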

For our password reset example, the debrief might produce three outcomes: the expired-token bug gets filed immediately as a high-priority defect, the token-invalidation bug gets linked to the authentication epic, and "test reset flow under poor network conditions" gets added as a permanent regression case because no one had thought to cover it before.
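Once the token bugs are fixed, the team can lock both findings in as automated regression checks. The sketch below runs against a hypothetical in-memory `ResetTokenStore` that encodes the expected behavior: expired tokens are rejected, and issuing a new token invalidates earlier ones. The class, its methods, and the ten-minute expiry are assumptions, not the product's real reset service. In practice, the same two checks would call the staging API instead.

```python
import secrets

# Assumption: the session notes only imply that tokens expire within 11 minutes.
TOKEN_TTL_SECONDS = 10 * 60


class ResetTokenStore:
    """Hypothetical stand-in for the reset service, encoding the expected behavior."""

    def __init__(self, ttl_seconds: float = TOKEN_TTL_SECONDS) -> None:
        self.ttl_seconds = ttl_seconds
        self._issued: dict[str, tuple[str, float]] = {}  # token -> (user, issued_at)
        self._latest: dict[str, str] = {}                # user -> newest token

    def issue(self, user: str, now: float) -> str:
        token = secrets.token_urlsafe(16)
        self._issued[token] = (user, now)
        self._latest[user] = token  # any earlier token for this user is now stale
        return token

    def is_valid(self, token: str, now: float) -> bool:
        record = self._issued.get(token)
        if record is None:
            return False
        user, issued_at = record
        is_newest = self._latest.get(user) == token
        return is_newest and now - issued_at < self.ttl_seconds


def test_expired_token_is_rejected() -> None:
    store = ResetTokenStore()
    token = store.issue("reset-user@example.test", now=0)
    assert store.is_valid(token, now=60)
    assert not store.is_valid(token, now=11 * 60)  # the wait used in Session 1


def test_new_token_invalidates_previous() -> None:
    store = ResetTokenStore()
    tokens = [store.issue("reset-user@example.test", now=i) for i in range(5)]
    assert store.is_valid(tokens[-1], now=10)
    assert not any(store.is_valid(t, now=10) for t in tokens[:-1])


if __name__ == "__main__":
    test_expired_token_is_rejected()
    test_new_token_invalidates_previous()
    print("token regression checks passed")
```

The `test_` prefix lets a runner such as pytest discover the checks. The `__main__` guard runs them directly.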

This is where exploratory testing stops being individual intuition and becomes team knowledge.

Common Exploratory Testing Mistakes

Even experienced teams get exploratory testing wrong. Here are the most common mistakes and what they look like in practice.

Making the scope too broad

"Explore checkout" is too wide. A tester spends ninety minutes bouncing between cart, payment, shipping, promo codes, and guest checkout without going deep on anything. The session produces a handful of vague observations but no real findings.

Fix: split large areas into smaller charters based on workflows, risks, user roles, or failure modes. "Explore promo code stacking when cart contains both subscription and one-time items" is a charter you can actually finish.

Confusing freedom with lack of discipline

A team calls their unstructured manual testing "exploratory" because it sounds better. There is no charter, no timebox, no notes. When someone asks what was tested, the answer is "we looked around." The work cannot be reviewed, repeated, or built upon.

Fix: if there is no mission, no evidence, and no follow-up action, it is ad hoc testing. That is fine sometimes, but do not confuse the two.
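The comparison table earlier suggests a quick self-check for whether a session was exploratory or ad hoc. The sketch below is hypothetical (`SessionRecord`, `missing_structure`, `classify`). It applies a strict reading: a session counts as exploratory only when it has a charter, a timebox, captured evidence, and at least one follow-up action. The strictness is an assumption, and a team may reasonably relax it.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SessionRecord:
    charter: Optional[str] = None                         # the mission
    timebox_minutes: Optional[int] = None                 # planned session length
    notes: list[str] = field(default_factory=list)        # captured evidence
    follow_ups: list[str] = field(default_factory=list)   # debrief actions


def missing_structure(session: SessionRecord) -> list[str]:
    """List which elements of exploratory structure the session lacks."""
    missing = []
    if not session.charter:
        missing.append("charter")
    if not session.timebox_minutes:
        missing.append("timebox")
    if not session.notes:
        missing.append("evidence")
    if not session.follow_ups:
        missing.append("follow-up")
    return missing


def classify(session: SessionRecord) -> str:
    # Strict assumption: any missing element means the work was ad hoc.
    return "exploratory" if not missing_structure(session) else "ad hoc"


looked_around = SessionRecord()  # "we looked around"
chartered = SessionRecord(
    charter="Explore password reset behavior for expired links on mobile",
    timebox_minutes=45,
    notes=["Expired token serves the form instead of rejecting at the redirect"],
    follow_ups=["File expired-token defect"],
)
print(classify(looked_around), missing_structure(looked_around))
print(classify(chartered))
```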

Measuring success only by bug count

A tester runs a careful exploratory session on a new feature and finds zero bugs. The session is dismissed as unproductive. But the session also confirmed that the hardest workflow path works correctly under three different user roles and two data configurations — information that gives the team real confidence before release.

Fix: bugs are one output. Others include clarified behavior, exposed weak requirements, confirmed stability in risky areas, and new regression candidates. All are useful.

Failing to turn insights into assets

A team runs exploratory sessions every sprint and finds recurring issues with state management after navigation events. Each time, the tester files a bug and moves on. No one creates a standing regression case for navigation state, and no one suggests automation for the pattern.

Fix: if exploratory testing repeatedly exposes the same type of problem, that knowledge should become a stronger test case, regression scenario, or automation candidate. The discovery is only half the value; the other half is making sure it stays discovered.
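Spotting a recurring pattern is easier if each finding is tagged with a category. You can then count how many distinct sessions reported it. The sketch below is a hypothetical helper. `automation_candidates`, the three-session threshold, and the sample log are assumptions for illustration. It flags categories that recur across sessions but have no standing coverage yet.

```python
from collections import defaultdict
from collections.abc import Iterable


def automation_candidates(
    findings: Iterable[tuple[str, str]],
    covered: set[str],
    min_sessions: int = 3,
) -> list[str]:
    """Return categories seen in at least `min_sessions` distinct sessions
    that do not already have a regression case or automated check."""
    sessions_by_category: dict[str, set[str]] = defaultdict(set)
    for session_id, category in findings:
        sessions_by_category[category].add(session_id)
    return sorted(
        category
        for category, sessions in sessions_by_category.items()
        if len(sessions) >= min_sessions and category not in covered
    )


# Hypothetical findings log from four sprints of exploratory sessions.
log = [
    ("sprint-1", "navigation state"),
    ("sprint-2", "navigation state"),
    ("sprint-2", "date formatting"),
    ("sprint-3", "navigation state"),
    ("sprint-4", "navigation state"),
    ("sprint-4", "date formatting"),
]
print(automation_candidates(log, covered=set()))  # ['navigation state']
```

Counting distinct sessions rather than raw findings keeps one noisy session from inflating a category.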

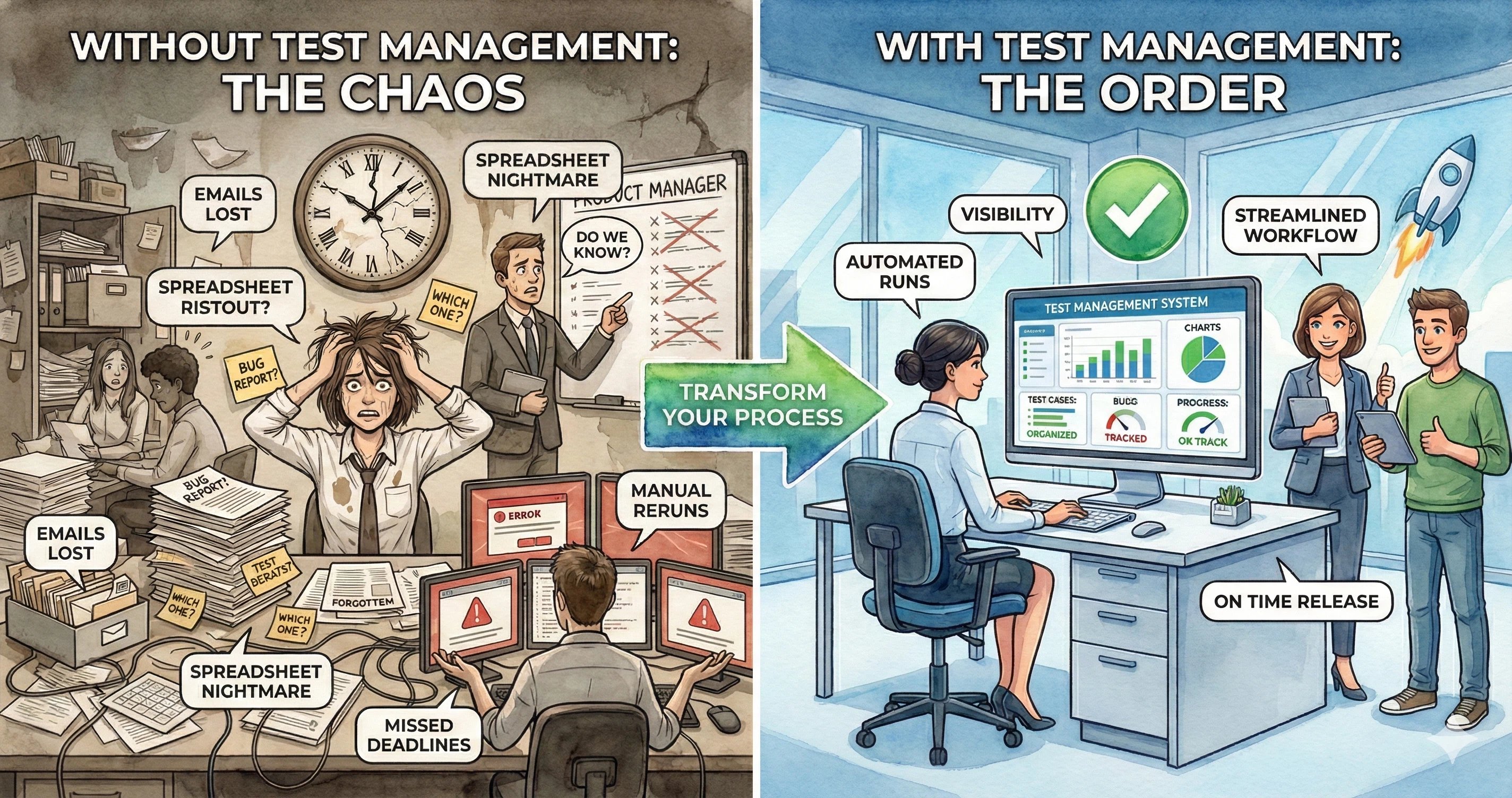

Keeping Exploratory Work Traceable

The biggest risk with exploratory testing is not the testing itself but what happens afterward. Findings scatter across sticky notes, screenshots, Slack threads, and disconnected bug tickets. Within a week, half the context is gone.

A test management system solves this by giving exploratory sessions the same operational structure as scripted testing: charters stored as test cases, sessions organized into runs, findings linked to issues, and results tied back to requirements. If your team uses QA Sphere, the workflow looks like this:

- Write charters as test cases. Use the description for the mission, preconditions for setup, and steps for focus areas. Link the relevant requirement if the charter is tied to a user story. Your exploratory charters live in the same test case library as your scripted cases.

- Create a dedicated test run. Group your charters into a focused run using the test run builder with a title, assignee, and milestone so every session has a clear owner and scope.

- Capture findings during execution. Update status (passed, failed, blocked), log time spent, and save observations as result comments while the context is fresh.

- Link defects without leaving the session. Create or attach Jira, GitHub, or Linear issues directly from the test result through the issue tracker integration. Developers get full context, and QA keeps a clean trail between the test and the bug.

- Review in debrief and promote coverage. After the session, decide what becomes a permanent regression case, what needs a follow-up charter, and which requirement gaps need team discussion. Because results are linked to requirements and issues, stakeholders can later ask which risky areas were explored, which failures are blocking release, and which exploratory findings turned into linked defects.
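Once results are linked to requirements and issues, those stakeholder questions become simple queries. The sketch below uses a hypothetical in-memory `SessionResult` record. It does not reflect QA Sphere's data model or API. The requirement IDs and issue keys are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class SessionResult:
    charter: str
    requirement: str                                 # linked requirement or user story
    status: str                                      # "passed", "failed", or "blocked"
    issues: list[str] = field(default_factory=list)  # linked tracker issue keys
    blocks_release: bool = False


# Hypothetical results; requirement IDs and issue keys are placeholders.
results = [
    SessionResult("Expired reset links on mobile", "REQ-AUTH-RESET", "failed",
                  ["ISSUE-1", "ISSUE-2"], blocks_release=True),
    SessionResult("Reset under dropped network", "REQ-AUTH-RESET", "failed",
                  ["ISSUE-3"]),
    SessionResult("Promo code stacking, mixed cart", "REQ-CHECKOUT-PROMO", "blocked"),
]

# Which risky areas were explored?
explored = sorted({r.requirement for r in results})
# Which failures are blocking release?
blocking = [r.charter for r in results if r.status == "failed" and r.blocks_release]
# Which exploratory findings turned into linked defects?
linked = {r.charter: r.issues for r in results if r.issues}

print(explored)
print(blocking)
print(linked)
```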

This is where exploratory testing stops looking informal and starts looking operationally mature. The testing finds the unknowns. The system makes sure they stay found.

Further Reading

If you want to go deeper into exploratory testing, these are worth your time:

- James Whittaker, Exploratory Software Testing (Addison-Wesley, 2009): a practical book organized around "tours," a metaphor for systematically exploring different dimensions of an application.

- James and Jonathan Bach, Session-Based Test Management: the original paper on SBTM, which formalizes how to structure, track, and debrief exploratory sessions.

- Michael Bolton, DevelopSense blog: Bolton writes extensively on the distinction between testing and checking, and on how exploratory and scripted approaches relate to each other.

- Maaret Pyhäjärvi, Exploratory Testing Index: an organized index of hundreds of posts on exploratory testing from a practitioner with over 25 years of experience.

Final Thoughts

Exploratory testing is one of the highest-leverage skills in software testing because it helps teams discover what scripted coverage has not imagined yet.

Used well, it is not random clicking. It is focused investigation, the kind that turns a vague "something feels off" into a filed defect, a new regression case, and a requirement the team forgot to write down. The illustrative password reset session in this guide surfaced two bugs, one UX gap, and one requirements question in 45 minutes. A narrow charter on a risky flow can produce that kind of yield.

The challenge is never the testing itself. It is keeping the work visible after the session ends. If your exploratory sessions are still spread across notebooks, screenshots, and disconnected bug tickets, consider giving them the same structure you give scripted tests: charters, runs, linked issues, and traceable results. That is what turns good individual testing into a repeatable team practice.

Written by

QA Sphere Team

The QA Sphere team shares insights on software testing, quality assurance best practices, and test management strategies drawn from years of industry experience.