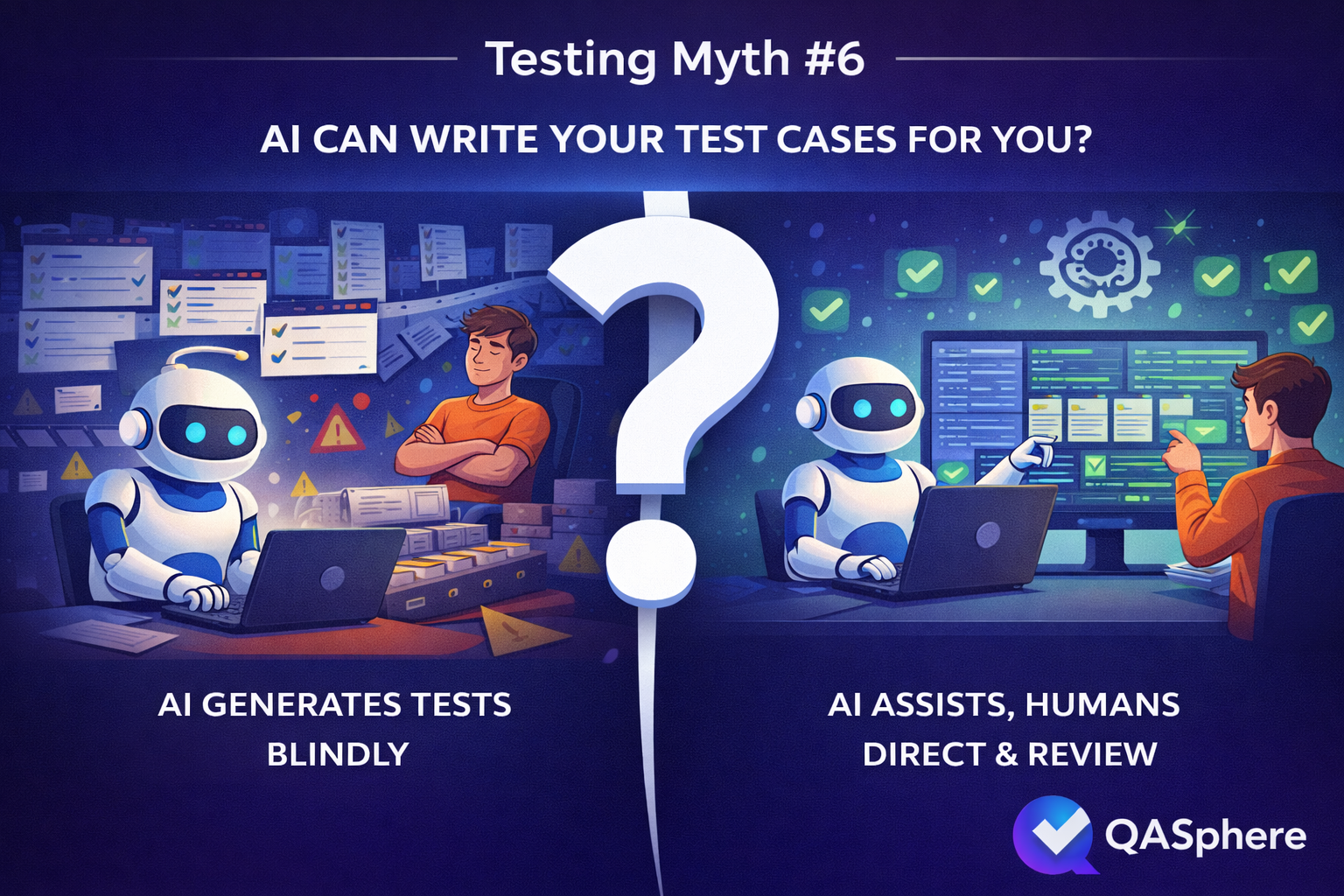

Testing Myths #6: AI Can Write Your Test Cases for You

Assisted creation, not delegated creation

This distinction matters when QA teams evaluate any tool that offers AI-generated test cases.

The question is not whether a tool can generate cases. Most can now. The question is whether generation happens inside a workflow that forces — or at least strongly encourages — human review, editing, and prioritization before the output becomes a real test asset.

AI test case creation is useful when it moves a team faster from rough idea to reviewable draft. That value is real, but it increases substantially when those drafts land immediately in a structured environment where they can be edited, categorized, and connected to actual execution. Test case management is not a nice-to-have alongside generation; it is what separates fast drafting that pays off from fast drafting that compounds into a maintenance problem.

The same logic extends to what happens after execution. When generated cases flow into test runs, surface their results in reporting, and connect to the team's issue tracker through integration, the team starts to accumulate evidence about which AI-assisted cases were worth keeping and which were noise. That feedback loop is how a team gets better at using AI over time, rather than simply producing more output. AI-Driven Test Case Creation points in the right direction: faster creation, with human review still expected.

The teams getting actual value from AI in test case work are not the ones who asked AI to own the process. They are the ones who stayed in control of it: using AI to reduce friction, applying their own judgment to what that output becomes, and treating generation as the beginning of test case creation, not the end of it.

Written by

Satvik Choudhary

Satvik Choudhary debunks common testing myths and misconceptions, helping QA teams separate fact from fiction in software quality assurance.