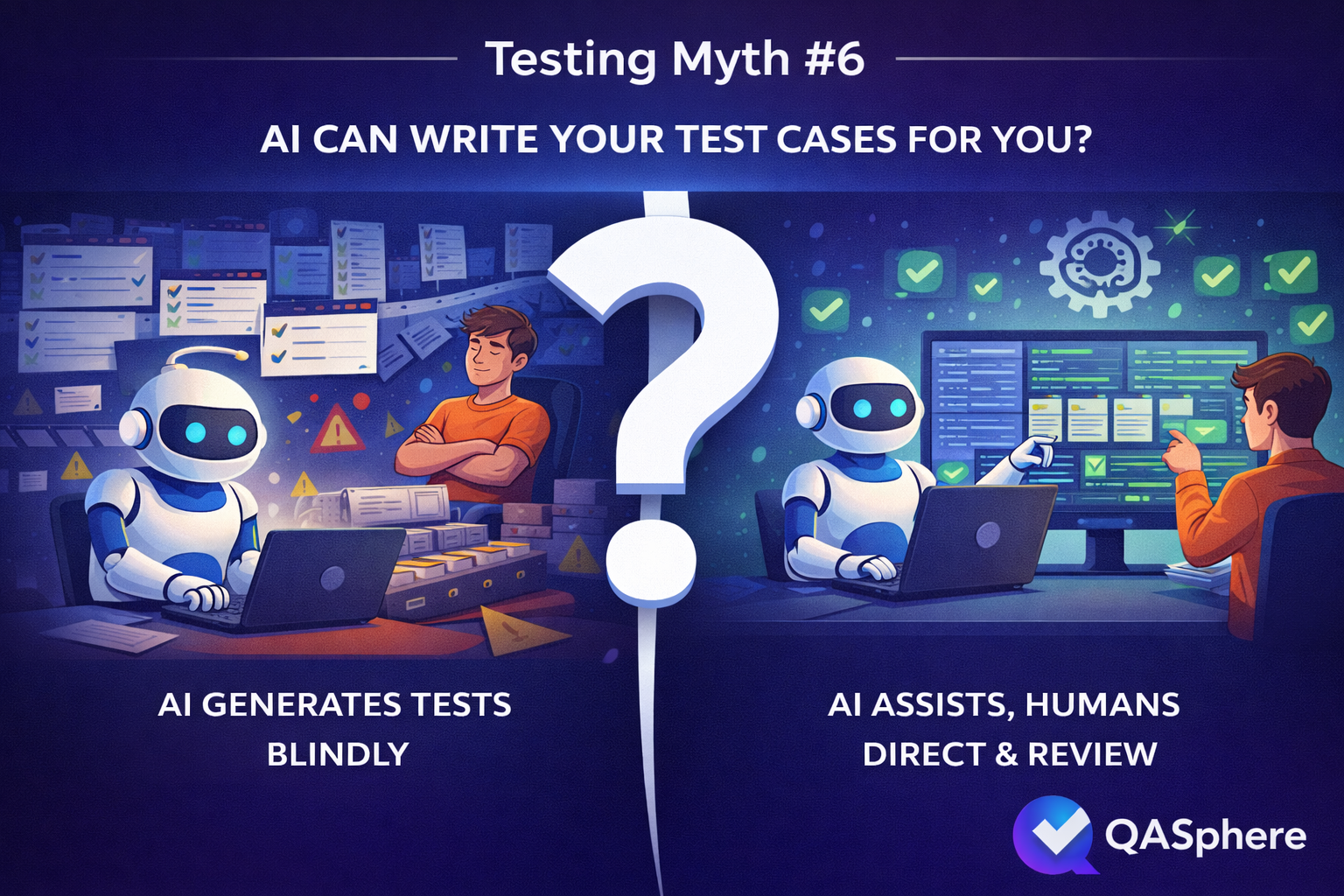

Testing Myths #6: AI Can Write Your Test Cases for You

Part of the Testing Myths series.

Imagine a tester prompts an AI tool with: "Write test cases for the checkout flow." Within seconds, the output arrives. It has preconditions. It has numbered steps. It covers a successful purchase, an invalid card, an empty cart. It looks like a test suite.

What it does not have: awareness that your checkout flow has a known edge case around gift card stacking that has caused three production incidents. It does not know that your largest customer segment skips the address validation step because of a legacy integration. It does not know which of its ten scenarios your team has any realistic chance of running this sprint. The output is plausible, polished, and missing the entire point.

That gap — between generated text and grounded testing — is the myth.

Where AI genuinely helps

It would be wrong to dismiss what AI does well here, and the strongest QA teams are not dismissing it.

AI is effective at reducing the friction of getting started. A tester with rough notes — a feature description, a ticket, a few bullet points about intended behavior — can use AI to expand that material into structured steps, suggest preconditions they may have skipped, and normalize language across a set of cases that would otherwise take half a day to write up. The productivity gain in that kind of assisted drafting is real.

AI is also useful as a format normalizer. When a team has test cases written in inconsistent styles across authors and sprints, a model can apply a standard template across the set quickly. It catches obviously missing fields. It can reframe vague expected results into more testable assertions. None of this requires deep product knowledge from the model — it is largely syntactic work, and AI handles syntactic work well.
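The "obviously missing fields" check shows how little product knowledge this kind of work needs. Here is a minimal sketch in Python, assuming a hypothetical template with `title`, `preconditions`, `steps`, and `expected_result` fields. The field names and the sample draft are illustrative and not taken from any particular tool.

```python
# Hypothetical template: these field names are assumptions for illustration.
REQUIRED_FIELDS = ("title", "preconditions", "steps", "expected_result")


def missing_fields(case: dict) -> list[str]:
    """Return the required fields that are absent or empty in a test case."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = case.get(name)
        # Treat None, blank strings, and empty lists as missing.
        is_blank_text = isinstance(value, str) and not value.strip()
        if value is None or is_blank_text or value == []:
            missing.append(name)
    return missing


# Made-up draft: no preconditions, and a whitespace-only expected result.
draft = {
    "title": "Checkout with invalid card",
    "steps": ["Add item to cart", "Enter an expired card", "Submit payment"],
    "expected_result": "   ",
}
print(missing_fields(draft))  # ['preconditions', 'expected_result']
```

A check like this can confirm that a field is filled in. It cannot tell you whether the content is true of your system, and that is the limit of syntactic work.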

The honest case for AI in test case work is: it lowers the cost of expression. The blank page problem, the inconsistent formatting problem, the "I know what I want to test but haven't had time to write it up" problem — AI addresses all of those.

Where AI falls short

It cannot supply context it was never given. This is the most fundamental limitation. A model generating test cases from a feature description produces test cases for a generic version of that feature, not your implementation of it. It does not know which architectural shortcuts your team took, which third-party integrations are fragile, which user paths have historically concentrated defects, or what your product's actual risk profile looks like. That context lives in your team's heads, your bug tracker, your production incident history, and your relationships with stakeholders. AI has access to none of it unless you explicitly provide it — and even then, it cannot weight that information the way a tester who has worked the product for a year can.

Polished output creates a review problem. This is subtler but just as serious. A draft that looks rough invites scrutiny. A draft that looks finished tends to get approved. AI-generated test cases are almost always well-formatted, which means errors in logic, false data assumptions, and steps that don't reflect actual system behavior are easy to miss on a quick pass. A precondition that sounds reasonable but contradicts how your environment actually works will sit quietly in your suite until it produces a false result that costs someone two hours to investigate. The polish is not a safety signal; if anything, it is a risk multiplier, because it reduces the friction of acceptance.

Priority blindness compounds at scale. AI generates cases without any sense of what is worth executing now, what can wait, and what should not exist. Combined with how easy generation has become, this tends to push teams toward the trap described in "Testing Myths: More Tests Always Mean Better Quality": the repository grows faster than the team's ability to maintain it, and coverage metrics go up while actual risk coverage stays flat or declines. A large suite full of generic scenarios offers much weaker guarantees than a smaller, deliberately shaped one, but the large suite looks better in a report.

The right workflow

The teams getting real value out of this tend to arrive at the same conclusion quickly: AI works best when the team shapes the workflow around it instead of treating generation as the workflow.

If the tool is used because management saw a demo and decided test writing is now solved, the result is usually predictable: too many cases, too little review, and a suite that looks fuller than it is. If the team uses AI deliberately as a drafting assistant, the results are much better. The difference is not the model. It is the level of human ownership around it. That is also the spirit of Tariq King and Jason Arbon's StickyMinds interview on AI in testing: get in front of the change, do not hand the whole practice over to it.

What this looks like as a working process: a tester or lead defines the scope — which area, which change, what level of risk, what level of depth. AI drafts candidate cases or expands structured notes. Then the actual QA work happens: removing generic or duplicate scenarios, correcting incorrect assumptions, adding product-specific edge cases that the model could not have known, tagging and prioritizing by risk, connecting the cases to requirements, bugs, and runs, and deciding what belongs in active execution versus reference documentation. That review loop is not optional overhead. It is where AI output becomes trustworthy enough to act on.
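The review loop above can be sketched as a small gate between generated drafts and active execution. This is a rough illustration under stated assumptions, not a prescribed implementation: the `DraftCase` type, the numeric risk scale, and the IDs `BUG-101` and `REQ-7` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DraftCase:
    title: str
    risk: int = 0  # hypothetical scale: 0 = untriaged, higher = riskier
    reviewed_by: Optional[str] = None
    linked_items: list[str] = field(default_factory=list)  # requirement or bug IDs


def dedupe(drafts: list[DraftCase]) -> list[DraftCase]:
    """Drop drafts whose titles match after case and whitespace normalization."""
    seen = set()
    unique = []
    for d in drafts:
        key = " ".join(d.title.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(d)
    return unique


def ready_for_execution(drafts: list[DraftCase]) -> list[DraftCase]:
    """Keep only reviewed, risk-tagged, linked drafts, highest risk first."""
    ready = [d for d in drafts if d.reviewed_by and d.risk > 0 and d.linked_items]
    return sorted(ready, key=lambda d: d.risk, reverse=True)


# Made-up drafts; the IDs are placeholders.
drafts = [
    DraftCase("Successful purchase"),
    DraftCase("successful  purchase"),  # duplicate of the first after normalization
    DraftCase("Gift card stacking", risk=3, reviewed_by="lead", linked_items=["BUG-101"]),
    DraftCase("Empty cart", risk=1, reviewed_by="lead", linked_items=["REQ-7"]),
]
active = ready_for_execution(dedupe(drafts))
print([d.title for d in active])  # ['Gift card stacking', 'Empty cart']
```

The unreviewed "Successful purchase" draft is not deleted. It simply never reaches the active set until a person reviews it, tags it by risk, and links it to something real.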

If you want the practitioner version of that warning rather than the polished vendor version, the Ministry of Testing discussion on AI-generated test cases reaches the same conclusion quickly: irrelevant scenarios, hallucinated assumptions, and the need for human review.

For a deeper look at balancing AI tooling with human judgment in testing, The Human Approach to AI Testing covers the broader set of considerations.

Assisted creation, not delegated creation

This distinction matters for how QA teams should evaluate any tool that offers AI-generated test cases.

The question is not whether a tool can generate cases. Most can now. The question is whether generation happens inside a workflow that forces — or at least strongly encourages — human review, editing, and prioritization before the output becomes a real test asset.

AI test case creation is useful when it moves a team faster from rough idea to reviewable draft. That value is real. But it increases substantially when those drafts land immediately inside a structured environment where they can be edited, categorized, and connected to actual execution. Test case management is not a nice-to-have alongside generation; it is what keeps fast drafting from compounding into a maintenance problem.

The same logic extends to what happens after execution. When generated cases flow into test runs, surface results in reporting, and connect through issue tracker integration, the team starts to accumulate evidence about which AI-assisted cases were worth keeping and which were noise. That feedback loop is how a team gets better at using AI over time, rather than just producing more output. The direction AI-Driven Test Case Creation points toward — faster creation with review still expected — is the right stance.
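One way to make that feedback loop concrete is to tag each case with its origin and compare outcomes once run results accumulate. The sketch below assumes a hypothetical record shape with `origin`, `defects_found`, and `retired` fields. The sample records are invented purely to exercise the function and are not measurements of anything.

```python
from collections import defaultdict


def summarize_by_origin(cases: list[dict]) -> dict[str, dict[str, float]]:
    """Per origin, compute the share of cases that found a defect and the share retired."""
    totals = defaultdict(lambda: {"count": 0, "found_defect": 0, "retired": 0})
    for case in cases:
        bucket = totals[case["origin"]]
        bucket["count"] += 1
        if case["defects_found"] > 0:
            bucket["found_defect"] += 1
        if case["retired"]:
            bucket["retired"] += 1
    return {
        origin: {
            "defect_rate": b["found_defect"] / b["count"],
            "retired_rate": b["retired"] / b["count"],
        }
        for origin, b in totals.items()
    }


# Invented sample records (hypothetical field names and values).
history = [
    {"origin": "ai_assisted", "defects_found": 1, "retired": False},
    {"origin": "ai_assisted", "defects_found": 0, "retired": True},
    {"origin": "ai_assisted", "defects_found": 0, "retired": True},
    {"origin": "ai_assisted", "defects_found": 0, "retired": False},
    {"origin": "manual", "defects_found": 2, "retired": False},
    {"origin": "manual", "defects_found": 0, "retired": False},
]
summary = summarize_by_origin(history)
print(summary["ai_assisted"])  # {'defect_rate': 0.25, 'retired_rate': 0.5}
```

Numbers like these only support the judgment call about which AI-assisted cases were worth keeping. They do not make it for the team.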

The teams getting actual value from AI in test case work are not the ones who asked AI to own the process. They are the ones who stayed in control of it: using AI to reduce friction, applying their own judgment to what that output becomes, and treating generation as the beginning of test case creation, not the end of it.