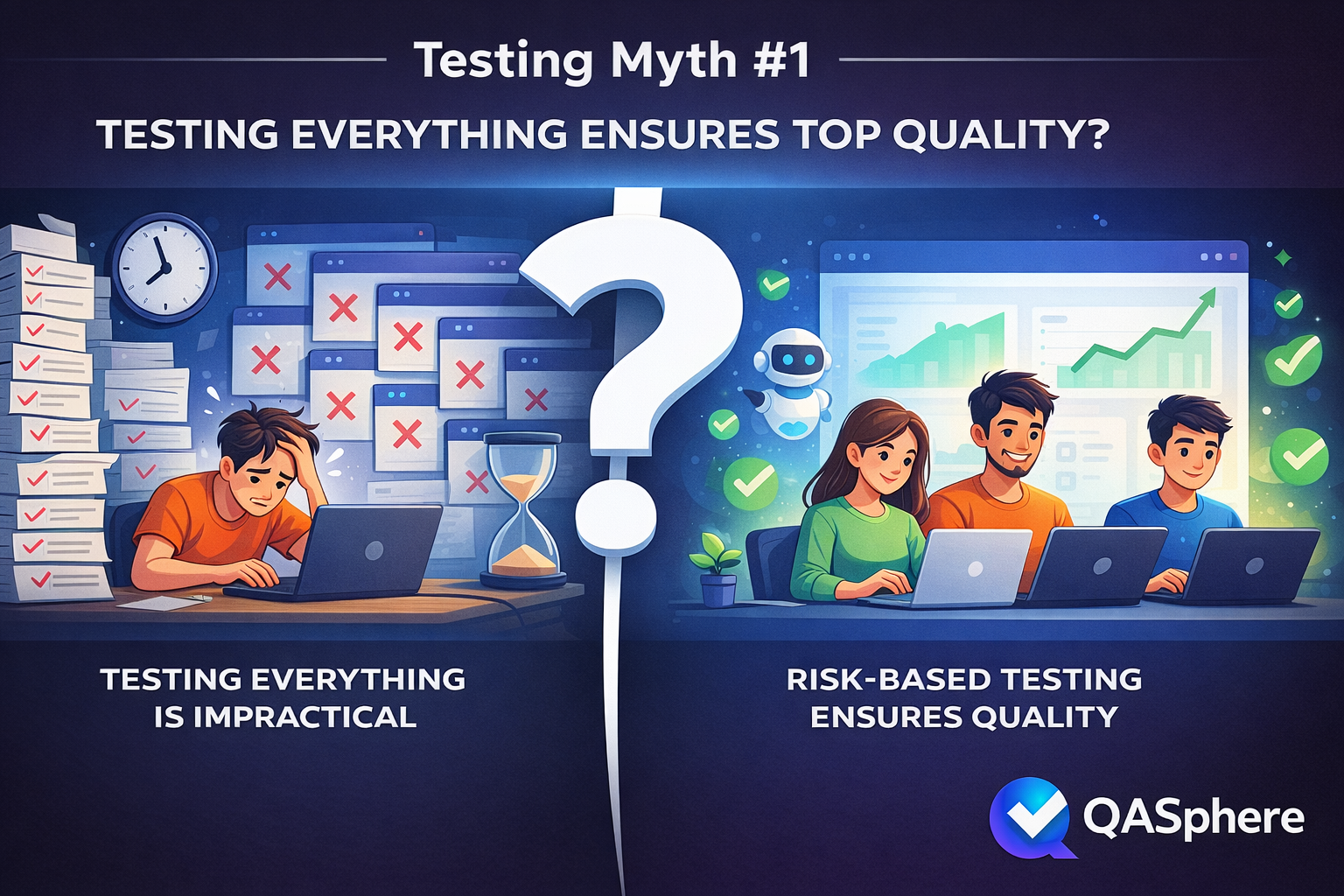

Testing Myths #1: More Tests Always Mean Better Quality

Part of the Testing Myths series.

The myth sounds responsible: if some testing is good, more testing must be better. Here is why it is wrong.

Consider a team that has spent a year building out a large test suite — several hundred automated end-to-end checks, a thick manual regression pack, and a coverage report that shows above ninety percent. When a critical payments bug reaches production, the postmortem reveals that the suite ran over two thousand checks before that release and none of them touched the specific combination of currency conversion, session state, and payment provider fallback that caused the failure. The suite was enormous. The signal on that particular risk was zero.

This pattern is common enough that it has a name: the ice cream cone anti-pattern. Teams pile up slow, brittle end-to-end coverage while staying relatively thin on the faster unit and integration checks that would catch problems earlier and more cheaply. The shape is upside down from where the confidence actually lives. More tests do not fix that. Often they make it worse.

Volume is not signal

The fundamental problem is that test count is the wrong unit of measurement.

Every test produces an output of some kind — pass, fail, skip, error. But not every output is equally informative. A test that checks the same behavior as six other tests adds almost nothing to confidence. A test that runs on a stable path, against stable data, in a stable environment, and passes every time tells you that path is stable — which you already knew. A test that fails intermittently due to timing or environment teaches you nothing reliable at all.
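
The distinction can be made concrete by looking at run history and covered risks instead of test count. The sketch below is a minimal illustration, not a definitive method. The function name `classify_checks`, the risk labels, and the rule that mixed outcomes on unchanged code indicate flakiness are all assumptions made for this example.

```python
from collections import defaultdict


def classify_checks(history, covered_risks):
    """Flag low-signal checks.

    history: check name -> list of "pass"/"fail" outcomes recorded against
             the same, unchanged code (an assumption of this sketch).
    covered_risks: check name -> set of hypothetical risk labels it exercises.
    """
    # Mixed outcomes on unchanged code suggest timing or environment noise.
    flaky = sorted(
        name for name, outcomes in history.items() if len(set(outcomes)) > 1
    )

    # Group checks by the exact set of risks they cover. Every check after
    # the first in a group repeats information the suite already has.
    groups = defaultdict(list)
    for name, risks in covered_risks.items():
        groups[frozenset(risks)].append(name)
    redundant = sorted(
        name for names in groups.values() for name in sorted(names)[1:]
    )

    return {"flaky": flaky, "redundant": redundant}


history = {
    "login_happy_path": ["pass", "pass", "pass"],
    "checkout_timeout": ["pass", "fail", "pass"],
}
covered_risks = {
    "login_happy_path": {"auth"},
    "login_happy_path_copy": {"auth"},
    "checkout_timeout": {"payments"},
}
report = classify_checks(history, covered_risks)
print(report)
```

Neither list is a deletion order. A flagged check is a prompt to ask what it tells the team that nothing else does.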

The test pyramid makes the same point structurally. Fast unit tests at the base, a smaller layer of integration tests in the middle, and a deliberately thin layer of end-to-end tests at the top. The shape is not arbitrary. It reflects where feedback is cheapest, most stable, and most actionable. A team that inverts the pyramid — or simply piles on tests without thinking about where they sit — ends up with a suite that is expensive to run, slow to give feedback, and noisy when it fails.
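
A quick way to see which shape a suite has is to compute each layer's share of the total. This is a rough sketch under stated assumptions: the layer names and the `looks_inverted` rule (more end-to-end checks than unit checks) are illustrative choices, not an established threshold.

```python
from collections import Counter

LAYERS = ("unit", "integration", "e2e")


def layer_shares(layers):
    """Return each layer's fraction of the suite, given one label per check."""
    counts = Counter(layers)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {layer: counts[layer] / total for layer in LAYERS}


def looks_inverted(shares):
    """Assumed rule of thumb: the top layer outweighs the base layer."""
    return bool(shares) and shares["e2e"] > shares["unit"]


suite_layers = ["unit"] * 2 + ["integration"] * 3 + ["e2e"] * 5
shares = layer_shares(suite_layers)
print(shares, looks_inverted(shares))
```

The numbers describe the suite. They do not decide anything on their own, and a suite can have a textbook shape and still miss the risks that matter.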

What teams are actually after is not volume. It is signal: reliable information about whether a specific risk is addressed. More tests only increase signal if they cover distinct risks in reliable ways. Otherwise they increase cost and noise while the risk profile barely changes.

What teams actually get wrong

They add tests where tests are easiest to write

After a production incident, the natural response is to add a test that would have caught it. That is reasonable. The problem is that it generalizes into a habit. Teams add tests around the same flows — the ones that are easy to set up, easy to automate, easy to explain to stakeholders — and the hard-to-test areas stay thinly covered indefinitely. Integration boundaries, async behavior, permission edge cases, third-party failure modes: these are exactly the areas where bugs surface in production, and exactly the areas where suites stay sparse because the tests are inconvenient to write. Volume accumulates where it is easy to accumulate, not where it is most needed.
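
Third-party failure modes are a good example of an area that is inconvenient to test but not impossible. The sketch below shows one way to exercise a payment provider fallback without calling a real provider, using `unittest.mock` from the Python standard library. `ProviderUnavailable`, `charge_with_fallback`, and the `charge` method are hypothetical names invented for this illustration, not any real provider's API.

```python
from unittest.mock import Mock


class ProviderUnavailable(Exception):
    """Hypothetical error raised when a payment provider cannot be reached."""


def charge_with_fallback(amount_cents, primary, fallback):
    """Try the primary provider and fall back only if it is unavailable."""
    try:
        return primary.charge(amount_cents)
    except ProviderUnavailable:
        return fallback.charge(amount_cents)


def check_fallback_used_when_primary_is_down():
    # Simulate the failure mode instead of waiting for it in production.
    primary = Mock()
    primary.charge.side_effect = ProviderUnavailable("timeout")
    fallback = Mock()
    fallback.charge.return_value = "fallback-receipt"

    result = charge_with_fallback(1999, primary, fallback)

    assert result == "fallback-receipt"
    fallback.charge.assert_called_once_with(1999)
    return result


print(check_fallback_used_when_primary_is_down())
```

One check like this covers a risk that a hundred more happy-path checkout tests would never touch.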

They confuse checking with testing

Mechanical verification and human investigation are not the same thing. Automation is excellent at checking known expectations. It is much weaker at surfacing problems nobody modeled in advance. A team that grows its automated suite without growing its exploratory practice gets more checks, not necessarily more understanding. That is a meaningful difference. Automating your way to safety only works when you already know what to look for, which is exactly where blind spots tend to hide.

They carry maintenance drag without noticing

Every test has a carrying cost. Automated checks break when the product changes. Manual cases go stale when features evolve. Long regression runs extend feedback cycles, which means bugs are discovered later, triaged later, and fixed later. At some threshold, which teams often pass without realizing it, the suite stops increasing quality and starts taxing delivery. The test suite becomes a weight on the team rather than a tool. This is not an argument against testing. It is an argument for treating each test as a deliberate investment with a carrying cost, not as a permanent artifact that is only ever added and never retired.
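
Carrying cost is easier to discuss once it is written down. The following sketch assumes a team records, per check and per review window, the minutes spent on upkeep, the total run time, and the number of real defects caught. The `CheckStats` fields and the 60-minute threshold are hypothetical and would need tuning for any real suite.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckStats:
    name: str
    upkeep_minutes: float   # time spent repairing or updating the check
    runtime_minutes: float  # total execution time in the review window
    defects_caught: int     # real product defects the check surfaced

    @property
    def carrying_cost(self) -> float:
        return self.upkeep_minutes + self.runtime_minutes


def review_candidates(stats, cost_threshold=60.0):
    """List costly checks that caught nothing, most expensive first."""
    flagged = [
        s for s in stats
        if s.defects_caught == 0 and s.carrying_cost > cost_threshold
    ]
    flagged.sort(key=lambda s: s.carrying_cost, reverse=True)
    return [s.name for s in flagged]


stats = [
    CheckStats("e2e_full_checkout", 240, 90, 0),
    CheckStats("unit_tax_rounding", 5, 1, 0),
    CheckStats("e2e_refund", 120, 60, 2),
    CheckStats("manual_legacy_export", 30, 45, 0),
]
candidates = review_candidates(stats)
print(candidates)
```

The output is a list of candidates for review, not for automatic deletion. A check that has caught nothing may still guard a risk worth paying for, and that judgment stays with the team.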

What to optimize instead

The goal of a test strategy is not to maximize tests. It is to maximize useful confidence per unit of effort.

That reframe changes the questions teams ask. Not: "How many tests do we have?" But: "What are we trying to learn before we ship, and what is the cheapest reliable way to learn it?" The answer depends on the change. A low-risk UI copy edit does not deserve the same workflow as payment logic, session handling, or a data migration with rollback implications. Matching effort to risk is not laziness — it is precision.

In practice, a healthier workflow starts by classifying the change. What changed? What could break downstream? Which parts are already well-covered by fast, reliable checks? Where does the team's understanding of the system get thin? Where have incidents come from before? Answers to those questions shape a suite that is genuinely proportional to risk rather than historically accumulated.
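
The classification step can be sketched as a small selection function. Everything here is an assumption for illustration: the area names, the risk scores in `RISK_BY_AREA`, and the mapping from risk level to test layers are placeholders for whatever a team's own incident history suggests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    areas: frozenset  # product areas the check exercises
    layer: str        # "unit", "integration", or "e2e"


# Hypothetical risk scores: 1 = low, 3 = high.
RISK_BY_AREA = {"payments": 3, "sessions": 3, "migrations": 3, "ui-copy": 1}

# Higher risk justifies slower, broader layers.
LAYERS_BY_RISK = {
    1: {"unit"},
    2: {"unit", "integration"},
    3: {"unit", "integration", "e2e"},
}


def select_checks(changed_areas, suite, default_risk=2):
    """Pick checks that touch the changed areas, scaled to the highest risk."""
    changed = set(changed_areas)
    risk = max((RISK_BY_AREA.get(a, default_risk) for a in changed), default=0)
    allowed = LAYERS_BY_RISK.get(risk, set())
    return [c.name for c in suite if c.areas & changed and c.layer in allowed]


suite = [
    Check("unit_price_rounding", frozenset({"payments"}), "unit"),
    Check("e2e_checkout", frozenset({"payments"}), "e2e"),
    Check("unit_banner_text", frozenset({"ui-copy"}), "unit"),
    Check("e2e_homepage", frozenset({"ui-copy"}), "e2e"),
]
print(select_checks({"ui-copy"}, suite))
print(select_checks({"payments"}, suite))
```

With these placeholder scores, a copy edit runs only the fast check for its own area, while a payments change also pulls in the end-to-end check. Areas missing from the table default to medium risk, so unknown territory is never treated as safe.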

This is also where the unit/integration/end-to-end balance matters. A pyramid-shaped mix is not a rule to apply mechanically. It is a heuristic that reflects where fast, cheap, stable feedback tends to live. Teams that weight heavily toward the top of the pyramid pay for every test run in time and flakiness, and they get slower feedback when they can least afford it.

The same logic runs through how to think about manual testing vs automation. The decision is not a moral stance; it is a question about which tool gives the team reliable information fast enough to matter. Automation excels at repetitive validation and at catching regressions in known behavior. Human exploratory testing excels at finding problems in areas where expected behavior is not yet well understood. Both matter. Neither substitutes for the other. The ratio should reflect the work, not convention.

Suites that stay healthy also require active maintenance. Cases that no longer reflect product behavior should be archived. Cases with redundant coverage should be consolidated. Regression Testing With QA Sphere covers the practical side of keeping that discipline without losing coverage of what matters.

How QA Sphere supports this

The myth becomes most expensive when test repositories turn into storage instead of serving as decision support. Teams keep adding cases and lose clarity on what is current, prioritized, duplicated, or directly tied to release risk.

Test case management matters here not because it helps teams collect more tests, but because its structure makes the difference between critical coverage and historical clutter visible. Tags, priorities, sections, ownership, and reusable steps let teams see what their suite is actually doing.

Test runs follow the same logic. A release should not force every team through the same full ritual every time. Risk-based runs, focused milestone sets, and targeted execution against specific changes are more useful than replaying everything. When those results connect to issue tracking and reporting, teams are no longer reporting testing activity — they are reporting release signal. That is a different and more honest conversation.

More tests do not make a product safer. Better evidence does.