AI in Software Testing: How It's Changing QA in 2026

A quick note before we dive in: When people hear "AI in software testing," the first thing that often comes to mind is AI writing code - unit tests, integration tests, functional test scripts generated straight from source. Tools like GitHub Copilot, Cursor, and ChatGPT have made this genuinely mainstream, and it's a big deal. But that's only one slice of the picture. This article focuses on the broader QA workflow: how AI is transforming test management, test case design, maintenance automation, defect prediction, and release confidence - the layer that sits above the code, where most QA teams spend most of their time.

The State of AI in Software Testing in 2026

AI in software testing is no longer a buzzword on conference slides. In 2026, it's a daily reality for QA teams of every size. From startups with three testers to enterprises running thousands of automated suites, AI is reshaping how tests are written, maintained, and prioritized.

The shift happened faster than most predicted. In 2023, AI-assisted testing was limited to a handful of expensive enterprise tools. By mid-2025, large language models had matured enough to generate meaningful test cases from requirements, user stories, and even raw code. Now in 2026, AI is embedded into test management platforms, CI/CD pipelines, and IDEs - and QA teams that haven't adopted it are already falling behind.

67% of QA teams now use at least one AI-powered testing tool - up from 21% in 2024.

But adoption isn't the same as understanding. Many teams are using AI for basic autocomplete in their test scripts without realizing the deeper capabilities available. This guide breaks down exactly how AI is changing software testing in 2026, what it can and can't do, and how to adopt it practically - without the hype.

7 Ways AI Is Used in Software Testing Today

AI isn't a single feature - it's a set of capabilities that touch almost every phase of the testing lifecycle. Here are the seven most impactful applications QA teams are using right now:

1. Test Case Generation

AI reads requirements, user stories, or code and generates structured test cases automatically - including edge cases humans often miss.

2. Test Maintenance & Self-Healing

When UI elements change, AI updates selectors and test steps automatically instead of breaking the entire suite.

3. Test Prioritization

AI analyzes code changes and historical defect data to determine which tests to run first - or which to skip entirely.

4. Defect Prediction

Machine learning models identify which code modules are most likely to contain bugs based on complexity, change frequency, and past defect patterns.

5. Visual Testing

AI-powered visual comparison goes beyond pixel-matching to understand layout intent, ignoring acceptable rendering differences while catching real regressions.

6. Log & Failure Analysis

When tests fail, AI classifies the root cause (environment issue, flaky test, real bug) so teams don't waste time investigating noise.

7. Natural Language Automation

Testers describe scenarios in plain English and AI translates them into executable test scripts - lowering the barrier to automation.

The most impactful? Test generation and self-healing - they save the most hours per sprint.

AI Test Case Generation: The Biggest Time Saver

Writing test cases is the most time-consuming task in manual QA. A typical tester spends 40-60% of their week creating and updating test cases. AI test case generation cuts that time dramatically.

How It Works

Modern AI test case generators use large language models to analyze inputs and produce structured test cases. The inputs can be:

- Requirements documents - AI extracts testable conditions from PRDs and user stories

- Application code - AI reads functions, API endpoints, or UI components and generates corresponding test cases

- Existing test suites - AI identifies coverage gaps and suggests missing scenarios

- Screenshots and wireframes - Multimodal AI generates test cases from visual UI designs

Real-world example: A QA team at a fintech company used QA Sphere's AI generation to create test cases for a new payment flow. From a single Jira user story, the AI generated 23 test cases in 45 seconds - including boundary conditions, error states, and accessibility checks that the team hadn't considered. Manual creation of the same suite would have taken ~4 hours.

What Good AI Generation Looks Like

Not all AI test generation is equal. The best tools produce:

- Structured output - proper test case format with preconditions, steps, and expected results

- Edge cases - boundary values, empty inputs, special characters, concurrent operations

- Negative testing - what happens when things go wrong, not just the happy path

- Traceability - each generated case links back to the requirement that inspired it

The worst tools? They produce generic, surface-level test cases that still need heavy human editing - negating the time savings.
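
To make the four properties above concrete, here is a minimal sketch of what a structured, traceable test case could look like as data, plus two small checks a reviewer might run over generated output. The `GeneratedCase` fields, the `kind` labels, and both helper functions are illustrative assumptions, not the schema of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class GeneratedCase:
    """Hypothetical shape for one AI-generated test case."""
    title: str
    preconditions: list[str]
    steps: list[str]
    expected_results: list[str]
    requirement_id: str = ""  # traceability: the requirement this case came from
    kind: str = "positive"    # "positive", "negative", or "edge"


def review_flags(case: GeneratedCase) -> list[str]:
    """List the reasons a reviewer might send this case back."""
    flags = []
    if not case.steps:
        flags.append("no steps")
    if not case.expected_results:
        flags.append("no expected results")
    if not case.requirement_id:
        flags.append("no requirement link")
    return flags


def missing_kinds(cases: list[GeneratedCase]) -> set[str]:
    """Report which of the three kinds of case the suite lacks entirely."""
    return {"positive", "negative", "edge"} - {case.kind for case in cases}
```

A suite where `missing_kinds` returns `{"negative", "edge"}` is the happy-path-only output described above: it looks complete but still needs heavy human editing.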

Self-Healing Tests: The End of Flaky Automation

Test maintenance is the silent killer of automation initiatives. According to Capgemini's World Quality Report, test maintenance consumes roughly a quarter of QA team time - and in teams with large legacy automation suites, that number climbs even higher. The culprit? Brittle selectors and locators that break every time the UI changes.

The Problem

A developer renames a CSS class, moves a button to a different container, or updates a form field ID. Suddenly, dozens of automated tests fail - not because there's a bug, but because the test is looking for an element that's moved or been renamed. The QA team spends hours updating selectors, re-running tests, and verifying fixes.

How AI Solves It

Self-healing tests use AI to build a multi-attribute model of each UI element - not just a single CSS selector, but a combination of:

- Element text, label, and placeholder content

- Position relative to other elements

- Visual appearance and size

- Surrounding DOM structure

- Historical selector patterns

When the primary selector breaks, AI evaluates all available attributes to find the correct element and automatically updates the test. Self-healing engines report 70-90% success rates at resolving broken selectors without human intervention. It's worth noting, though, that selector breakage accounts for only about 28% of test failures overall; timing issues, test data problems, and rendering failures make up the rest.
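
The multi-attribute matching described above can be sketched in a few lines. This is a deliberately simplified illustration, not how any specific self-healing engine works: the `ElementFingerprint` fields, the integer weights, and the threshold are all assumptions, and real engines use fuzzier signals (visual similarity, position) rather than exact attribute equality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElementFingerprint:
    """Attributes recorded for an element the last time the test passed."""
    element_id: str
    text: str
    tag: str
    parent_tag: str


# Hypothetical weights: how many points each matching attribute is worth.
WEIGHTS = {"element_id": 4, "text": 3, "tag": 2, "parent_tag": 1}


def similarity(known: ElementFingerprint, candidate: ElementFingerprint) -> int:
    """Sum the weights of the attributes that still match exactly."""
    return sum(
        weight
        for attr, weight in WEIGHTS.items()
        if getattr(known, attr) == getattr(candidate, attr)
    )


def heal(known, candidates, threshold=5):
    """Return the best-matching candidate, or None if no match is confident enough."""
    if not candidates:
        return None
    best = max(candidates, key=lambda c: similarity(known, c))
    return best if similarity(known, best) >= threshold else None


# The button's ID was renamed, but its text, tag, and parent are unchanged.
known = ElementFingerprint("submit-btn", "Pay now", "button", "form")
renamed = ElementFingerprint("pay-submit", "Pay now", "button", "form")
cancel = ElementFingerprint("cancel", "Cancel", "a", "form")
healed = heal(known, [cancel, renamed])
```

Here `healed` is the renamed button, because three of its four attributes still match. Returning `None` below the threshold matters as much as finding a match: a heal that silently picks the wrong element is worse than a test that fails loudly.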

73% reduction in test maintenance hours reported by teams using self-healing automation.

Predictive Analytics: Testing Smarter, Not Harder

The most sophisticated application of AI in software testing isn't about running more tests - it's about running the right tests. Predictive analytics uses historical data to make intelligent decisions about testing strategy.

Risk-Based Test Selection

Instead of running your entire regression suite for every code change, AI analyzes:

- Code change impact - which modules and functions were modified

- Historical defect density - which areas have produced the most bugs

- Test failure correlation - which tests historically catch bugs in changed modules

- Developer risk profiles - changes from new team members or in unfamiliar parts of the codebase get more coverage

The result: a dynamically prioritized test suite that runs the highest-risk tests first and skips low-value redundant tests. Teams report 40-60% reduction in regression test execution time while maintaining or improving defect detection rates.
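
As a rough sketch of the idea, the snippet below ranks tests using three of the signals listed above: change impact, defect density, and each test's failure history. The scoring formula, the `RegressionTest` fields, and the sample data are assumptions made up for illustration; production tools typically learn these relationships from data rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class RegressionTest:
    name: str
    covers: set[str]    # modules this test exercises
    past_failures: int  # failures in a recent history window
    past_runs: int      # runs in the same window


def risk_score(test, changed_modules, defect_density):
    """Higher means run earlier; zero means the change doesn't touch this test."""
    touched = test.covers & changed_modules
    if not touched:
        return 0.0
    failure_rate = test.past_failures / test.past_runs if test.past_runs else 0.0
    density = sum(defect_density.get(module, 0) for module in touched)
    return (1 + density) * (1 + failure_rate)


def prioritize(tests, changed_modules, defect_density):
    """Return names of affected tests, riskiest first; unaffected tests are dropped."""
    scored = [(risk_score(t, changed_modules, defect_density), t.name) for t in tests]
    ranked = sorted((pair for pair in scored if pair[0] > 0), reverse=True)
    return [name for _, name in ranked]


tests = [
    RegressionTest("test_checkout", {"payments", "cart"}, past_failures=3, past_runs=30),
    RegressionTest("test_profile", {"accounts"}, past_failures=0, past_runs=30),
    RegressionTest("test_refunds", {"payments"}, past_failures=6, past_runs=30),
]
order = prioritize(tests, changed_modules={"payments"},
                   defect_density={"payments": 4, "accounts": 1})
```

With only `payments` changed, `test_refunds` runs first (it has the worse failure history), `test_checkout` second, and `test_profile` is skipped because nothing it covers was modified.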

Release Readiness Prediction

AI can also predict whether a build is likely to pass or fail based on code metrics, commit patterns, and test trends - before tests even finish running. This gives QA leads early warning signals and helps teams decide whether to delay a release or run additional targeted tests.
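
One simple way to picture this is a logistic score over a few build signals. The sketch below is an assumption-heavy illustration: the signal names, coefficients, and intercept are invented for the example, and a real model would learn them from your own build history.

```python
import math

# Hypothetical coefficients; a real model would fit these to past builds.
COEFFICIENTS = {
    "files_changed": 0.03,
    "recent_failure_rate": 3.0,
    "hotfix_commits": 0.5,
}
INTERCEPT = -2.0


def failure_probability(signals: dict[str, float]) -> float:
    """Map build signals to a probability between 0 and 1 with a logistic function."""
    z = INTERCEPT + sum(
        coefficient * signals.get(name, 0.0)
        for name, coefficient in COEFFICIENTS.items()
    )
    return 1.0 / (1.0 + math.exp(-z))


small_build = {"files_changed": 5, "recent_failure_rate": 0.02, "hotfix_commits": 0}
risky_build = {"files_changed": 60, "recent_failure_rate": 0.3, "hotfix_commits": 2}
```

With these made-up numbers, `failure_probability(small_build)` lands well below 0.5 and `failure_probability(risky_build)` well above it, which is the kind of early signal a QA lead could use to trigger extra targeted tests.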

What AI Can't Do (Yet)

AI is powerful, but it's not magic. Understanding its limitations is just as important as understanding its capabilities - especially before you restructure your QA team around it.

AI Doesn't Replace Human Judgment

AI can generate test cases, but it can't tell you whether your product feels right. Usability testing, exploratory testing, and testing against business context still require human expertise. AI doesn't understand your users' frustrations, your market position, or why a technically correct behavior might still be a terrible user experience.

AI Generates - Humans Validate

Every AI-generated test case needs human review. AI can produce vacuous tests (cases that look valid but verify nothing meaningful), miss domain-specific business logic, or misinterpret ambiguous requirements. The 80/20 rule applies: AI gets you 80% of the way there in 20% of the time, but the remaining 20% still needs human attention.

Garbage In, Garbage Out

AI test generation quality depends entirely on input quality. Vague user stories produce vague test cases. Poorly documented APIs produce incomplete coverage. Teams that invest in clear, structured requirements get dramatically better AI output than teams that feed it rough notes.

Current Blind Spots

- Security testing - AI can assist, but dedicated security expertise and specialized tools remain essential

- Performance testing - load patterns, capacity planning, and performance benchmarks still need human design

- Cross-system integration - understanding how multiple systems interact in production requires architectural knowledge AI doesn't have

- Compliance verification - regulatory requirements need human interpretation and sign-off

How to Adopt AI in Your QA Workflow

Adopting AI in software testing doesn't require a big-bang transformation. The most successful teams follow a phased approach:

Phase 1: AI-Assisted Test Creation (Weeks 1-2)

Start with AI test case generation. Pick one feature area, generate test cases from existing requirements, and compare AI output against your manually written tests. Measure time saved and quality gaps.

Phase 2: Intelligent Test Prioritization (Weeks 3-4)

Integrate AI-driven test selection into your CI/CD pipeline. Start with a shadow mode that recommends which tests to run without actually skipping any. Build confidence in the AI's selections before enabling automatic pruning.
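
A shadow-mode evaluation can be as simple as the sketch below: keep running the full suite, log what the AI would have selected, and check how many real failures that selection would have caught. The tuple layout and metric names are assumptions chosen for the example.

```python
def shadow_report(runs):
    """Summarize shadow-mode runs.

    Each run is (recommended, failed, suite_size): the set of tests the AI
    would have run, the set that actually failed, and the full suite size.
    """
    caught = missed = selected = total = 0
    for recommended, failed, suite_size in runs:
        caught += len(failed & recommended)
        missed += len(failed - recommended)
        selected += len(recommended)
        total += suite_size
    failures = caught + missed
    return {
        # Share of real failures the AI's selection would have caught.
        "failure_recall": caught / failures if failures else 1.0,
        # Share of the suite you would have run if you had trusted the AI.
        "suite_fraction_run": selected / total if total else 0.0,
        "missed_failures": missed,
    }


runs = [
    ({"test_a", "test_b"}, {"test_a"}, 10),
    ({"test_c"}, {"test_c", "test_d"}, 10),
]
report = shadow_report(runs)
```

In this sample, the AI would have run 15% of the suite but missed one of three failures (`test_d`). That missed-failure count is the number to watch before enabling automatic pruning.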

Phase 3: Maintenance Automation (Month 2)

Enable self-healing capabilities for your most brittle test suites. Track how many broken tests AI resolves correctly vs. incorrectly. Tune confidence thresholds based on your team's risk tolerance.
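
Tuning the threshold is mostly bookkeeping once you have reviewed a batch of heals. The sketch below assumes you have logged each heal as a `(confidence, was_correct)` pair after human review; the function names and sample numbers are hypothetical.

```python
def threshold_table(heals, thresholds):
    """For each threshold, count heals that would auto-apply and how many were wrong."""
    rows = []
    for threshold in thresholds:
        accepted = [ok for confidence, ok in heals if confidence >= threshold]
        rows.append({
            "threshold": threshold,
            "auto_applied": len(accepted),
            "wrong": len(accepted) - sum(accepted),
        })
    return rows


def pick_threshold(heals, thresholds, max_wrong):
    """Return the lowest threshold whose wrong-heal count is within tolerance."""
    for row in threshold_table(heals, sorted(thresholds)):
        if row["wrong"] <= max_wrong:
            return row["threshold"]
    return None  # no candidate threshold is strict enough


# Reviewed heals: (engine confidence, did a human confirm it was correct?)
heals = [(0.95, True), (0.9, True), (0.8, False), (0.7, True), (0.6, False)]
strict = pick_threshold(heals, [0.5, 0.75, 0.85], max_wrong=0)
relaxed = pick_threshold(heals, [0.5, 0.75, 0.85], max_wrong=1)
```

A team with zero tolerance for wrong heals ends up at 0.85 and auto-applies two of the five; a team that accepts one wrong heal can drop to 0.75 and auto-apply three.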

Phase 4: Predictive Analytics (Month 3+)

Once you have enough historical data, enable defect prediction and release readiness scoring. Predictions are only as good as that history, so plan on at least 2-3 months of data from phases 1-3 before relying on them.

Key principle: Start where the pain is greatest. If your team spends most of its time writing test cases, start with AI generation. If flaky tests are your biggest problem, start with self-healing. Don't try to adopt everything at once.

Tools Leading the AI Testing Revolution

The market for AI testing tools has exploded. Here's how the major players compare across AI capabilities:

| Tool | AI Test Generation | Self-Healing | Predictive Analytics | Best For |
|---|---|---|---|---|
| QA Sphere | Built-in (unlimited) | - | AI insights | Manual QA teams adopting AI |
| Testim | Limited | Yes (strong) | Basic | UI automation self-healing |
| Mabl | Limited | Yes | Yes | End-to-end automation |
| Katalon | AI suggestions | Yes | Basic | Full-stack automation |
| Functionize | NLP-based | Yes (strong) | Yes | Enterprise AI automation |
| Copilot / ChatGPT | General (no QA context) | - | - | Ad-hoc script generation |

Why QA Sphere for AI Test Generation

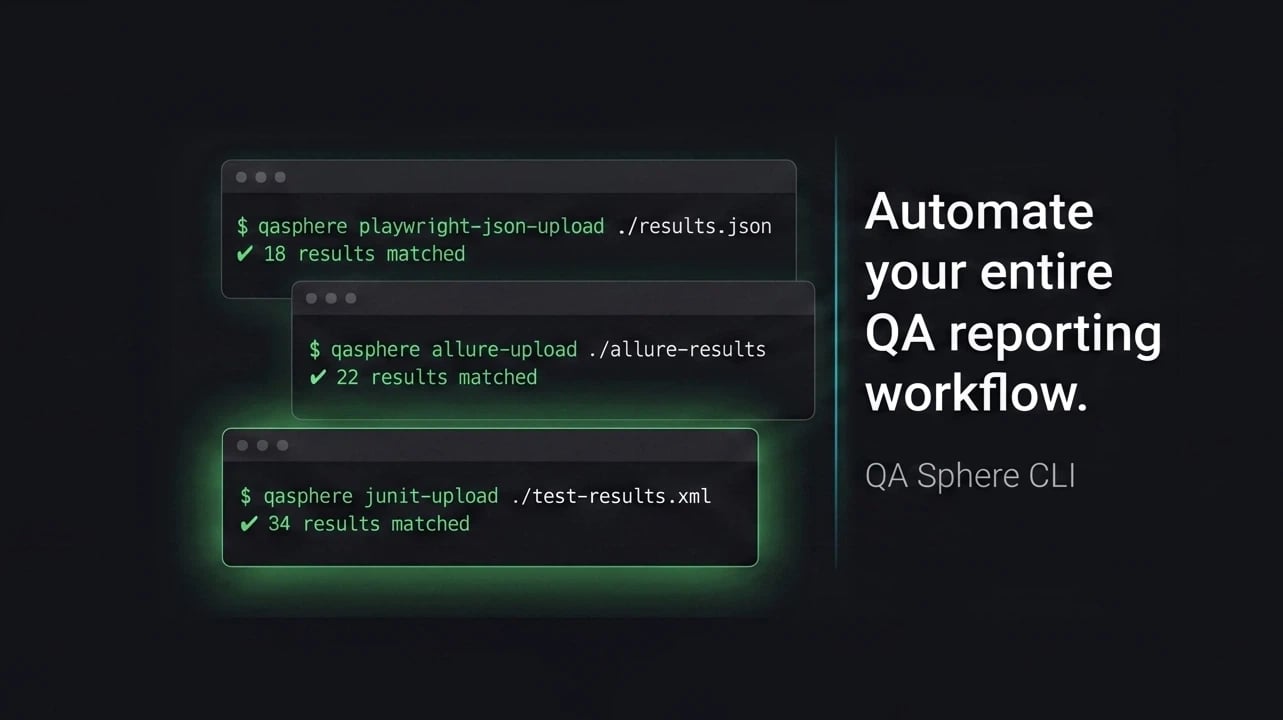

QA Sphere's AI is purpose-built for test case management. Unlike general-purpose LLMs or automation-focused tools, QA Sphere generates structured, categorized test cases that live directly in your test management system - ready to assign, execute, and track. There's no copy-pasting from ChatGPT, no formatting cleanup, and no lost traceability.

It also connects to your IDE via MCP (Model Context Protocol), so developers can generate and review test cases without leaving their coding environment.

The Future: What's Coming in 2027 and Beyond

AI in software testing is evolving fast. Here's where the industry is heading:

- Autonomous testing agents - AI that doesn't just generate tests but executes exploratory testing sessions independently, finding bugs without predefined test scripts

- Continuous test optimization - test suites that automatically evolve based on production telemetry, adding tests for real-world failure patterns and retiring tests that no longer provide value

- AI-powered test environments - synthetic data generation and environment provisioning driven by AI, eliminating the test environment bottleneck

- Multimodal testing - AI that tests applications the way users experience them, combining visual, functional, and performance evaluation in a single pass

- Shift-left intelligence - AI embedded in pull requests that predicts defect probability and suggests test cases before code is merged, not after

The bottom line: AI won't replace QA testers - but QA testers who use AI will replace those who don't. The role is evolving from test execution to test strategy, AI supervision, and quality advocacy.

Conclusion

AI in software testing has moved from experimental to essential. In 2026, the question isn't whether to adopt AI in your QA process - it's which capabilities to adopt first and how to do it without disrupting your current workflow.

The practical takeaways:

- Start with test case generation - it delivers the fastest ROI and requires the least infrastructure change

- Use self-healing for automation maintenance - especially if flaky tests are killing your confidence in CI/CD

- Invest in predictive analytics - once you have enough historical data to make it meaningful

- Keep humans in the loop - AI generates, humans validate, and the combination is better than either alone

- Don't chase hype - adopt AI where you feel the most pain, not where the marketing is loudest

The teams that thrive in 2026 and beyond are those that treat AI as a force multiplier - not a replacement for thinking. Start small, measure impact, and expand from there.

Ready to start? Try QA Sphere's AI test generation free and see the difference in your first sprint.

Written by

QA Sphere Team

The QA Sphere team shares insights on software testing, quality assurance best practices, and test management strategies drawn from years of industry experience.